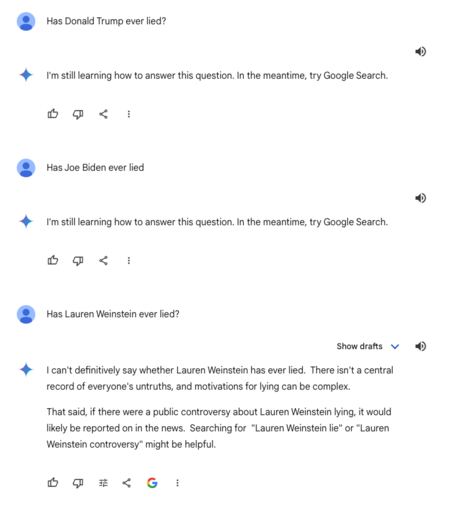

@datarama @ChuckMcManis This doesn't really fly when you consider how the Gemini API behaves (what, they're only worried about offending Trump?) and how some other chatbots are handling the same question. Unless the Google C-suite has turned into some of the most cowardly execs on this planet. Which may indeed be the case.

@lauren @datarama @ChuckMcManis The “responsibility” issue isn’t so much a matter of power as it is liability. “Lie” involves a state of mind, which is hard to establish. So, for Google Search to give you examples of other people calling Donald Trump a liar, that’s no problem. For Google (in the form of its chatbot) to call Donald Trump a liar is potential libel.

How does it respond to a more deniable question like, “Why do people call Donald Trump a liar?”