For the NFS shares, there are generally two approaches. The first is to mount the share on the host OS, then map it into the container. Let’s say the host has the NFS share mounted at /nfs, and the folders you need are at /nfs/homes. You could do “docker run -v /nfs/homes:/homes smtpserverimage” and then those would be available at /homes inside the container.
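
One thing to watch with the host-mount approach: with -v, Docker creates a missing host path as an empty directory, so if the NFS share isn’t mounted yet the container can quietly start with an empty /homes. Here’s a minimal sketch of a guard for that. The function name start_smtp and the DOCKER override are made up for illustration, and it assumes the host has already mounted the share at /nfs.

```shell
#!/bin/sh
# Hypothetical helper: only start the container if the NFS folder is really there.
# Assumes the host has already mounted the share at /nfs (e.g. via /etc/fstab).
start_smtp() {
    homes_dir="${1:-/nfs/homes}"
    if [ ! -d "$homes_dir" ]; then
        echo "refusing to start: $homes_dir not found (is the NFS share mounted?)" >&2
        return 1
    fi
    # DOCKER can be overridden for a dry run, e.g. DOCKER=echo
    ${DOCKER:-docker} run -v "$homes_dir:/homes" smtpserverimage
}
```

Calling “DOCKER=echo start_smtp” just prints the docker arguments instead of running them, which is handy for checking the mapping first.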

The second approach is to set up an NFS client inside the image, and have that connect directly to the NFS server. This is generally seen as a bad idea, since it complicates the image and tightly couples it to one specific configuration. But there are of course exceptions to every rule, so it’s good to keep in mind.

With database servers, you’d set the server up to accept network connections, and then just give the container the address and login details in its config.
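
As a rough sketch of what “address and login details in config” can look like when passed in as env vars: the variable names below (DB_HOST, DB_USER and so on) are hypothetical, so use whatever your application actually reads. It just assembles a PostgreSQL-style connection URL and falls back to the usual defaults.

```shell
#!/bin/sh
# All variable names here are hypothetical placeholders.
db_url() {
    # ${VAR:?msg} aborts with an error if the variable is unset or empty
    : "${DB_HOST:?set DB_HOST to the database server address}"
    : "${DB_PASSWORD:?set DB_PASSWORD}"
    # Note: a password with special characters would need URL-encoding first
    printf 'postgresql://%s:%s@%s:%s/%s\n' \
        "${DB_USER:-postgres}" "$DB_PASSWORD" \
        "$DB_HOST" "${DB_PORT:-5432}" "${DB_NAME:-postgres}"
}
```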

And having a special setup… How special are we talking? If it’s configuration, that’s handled by env vars and by mapping in config files. If it’s specific plugins or compile options… Most prebuilt images cast a wide net and take a very “everything included” approach, and come with instructions and mechanisms for adding plugins to the image.

If you can’t find what you’re looking for, you can build your own image. Generally that’s done by basing your Dockerfile on an official image for that software and then making your changes. We can again take the “postgres” image, since it’s a fairly well made one with exactly the kind of easy hook we’re looking for. From its documentation:

If you would like to do additional initialization in an image derived from this one, add one or more *.sql, *.sql.gz, or *.sh scripts under /docker-entrypoint-initdb.d (creating the directory if necessary). After the entrypoint calls initdb to create the default postgres user and database, it will run any *.sql files, run any executable *.sh scripts, and source any non-executable *.sh scripts found in that directory to do further initialization before starting the service.

So if you have a .sh script that does some extra stuff before the DB starts up, let’s say “mymagicpostgresthings.sh”, and you want an image based on PostgreSQL 16 that includes it, you could make this Dockerfile in the same folder as that file:

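    FROM postgres:16

    # -p avoids a build failure if the directory already exists in the base image
    RUN mkdir -p /docker-entrypoint-initdb.d

    COPY mymagicpostgresthings.sh /docker-entrypoint-initdb.d/mymagicpostgresthings.sh

    # Executable .sh scripts are run by the entrypoint; non-executable ones are sourced
    RUN chmod a+x /docker-entrypoint-initdb.d/mymagicpostgresthings.sh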

When you run “docker build . -t mymagicpostgres” in that folder, it builds an image with your file included and tags it “mymagicpostgres”, which you can then run with “docker run -e POSTGRES_PASSWORD=mysecretpassword -p 5432:5432 mymagicpostgres”.
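
If it helps, here’s a sketch of what a script like mymagicpostgresthings.sh could contain, written as a command that creates the file next to the Dockerfile. The database name “myapp” is just a placeholder, and the psql call follows the pattern I remember from the postgres image’s documentation, so treat it as an assumption rather than the one true way.

```shell
# Writes a hypothetical init script into the current folder.
# "myapp" is a placeholder database name.
cat > mymagicpostgresthings.sh <<'EOF'
#!/bin/bash
set -e

# POSTGRES_USER and POSTGRES_DB are provided by the image's entrypoint.
# ON_ERROR_STOP=1 makes psql exit non-zero if a statement fails.
psql -v ON_ERROR_STOP=1 --username "$POSTGRES_USER" --dbname "$POSTGRES_DB" <<EOSQL
CREATE DATABASE myapp;
EOSQL
EOF
```

Keep in mind that these init scripts only run when the container starts with an empty data directory, so they won’t re-run against an existing database volume.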

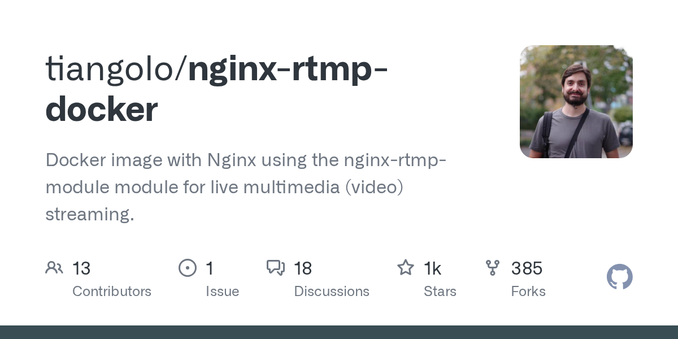

In some cases you need a more complex approach. For example, I have an nginx streaming server which needs extra patches. I found this repository for just that, and if you look at its Dockerfile you can see each step it’s doing. I needed a few modifications, so I have my own copy with a different nginx.conf, an extra patch that it downloads and applies to the source code, and a startup script that changes some settings from env vars, but the repository had 90% of the work done.
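
To give an idea of the “startup script that changes some settings from env vars” part, here’s a minimal sketch. It is not my actual script: the placeholder tokens (__LISTEN_PORT__, __WORKERS__), the env var names and the defaults are all invented for the example.

```shell
#!/bin/sh
# Hypothetical startup helper: fill placeholders in a config template
# from environment variables, falling back to defaults when they are unset.
render_config() {
    template="$1"
    output="$2"
    sed -e "s|__LISTEN_PORT__|${LISTEN_PORT:-1935}|g" \
        -e "s|__WORKERS__|${WORKERS:-1}|g" \
        "$template" > "$output"
}

# In a real image, the script would typically end with something like:
#   render_config /etc/nginx/nginx.conf.template /etc/nginx/nginx.conf
#   exec nginx -g 'daemon off;'
```

The “exec” at the end matters: it replaces the script with nginx, so nginx becomes the container’s main process and receives stop signals directly.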

So depending on how big the changes you need are, you might have to recreate the image from scratch, or you can piggyback on what’s already made. As for the “docker script to launch it”, that’s usually a docker-compose.yml file. Here’s a postgres example:

    version: '3.1'

    services:
      db:
        image: postgres
        restart: always
        environment:
          POSTGRES_PASSWORD: example

      adminer:
        image: adminer
        restart: always
        ports:
          - 8080:8080

If you run “docker compose up -d” in that file’s folder, docker will download the images for postgres and adminer, start a container from each, and forward port 8080 on the host to adminer. From adminer’s point of view, the postgres server is available under the hostname “db”. And since both have “restart: always”, if one of them crashes or the machine reboots, docker will start them up again. So that will keep running until you run “docker compose down” or something catastrophic happens.