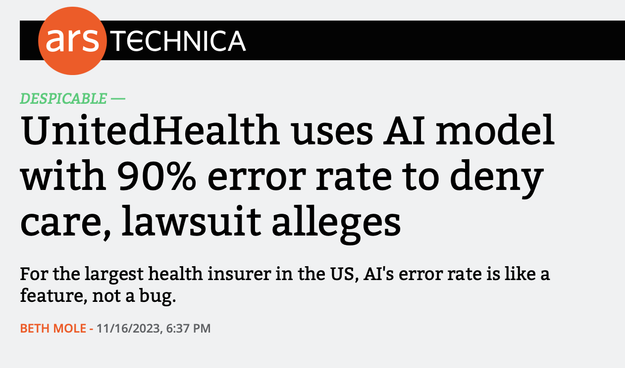

When we warn that the real threat of AI is how it's used against people in the present, not the fantasy that someday computers might think for themselves, this is exactly the kind of thing we're talking about: health insurers using AI to deny care.

@Obdurodon @parismarx

Companies have long pretended that nobody is personally responsible for shitty behavior by saying "it's just policy." However, people have always known that, at some level, people wrote those policies.

More recently, it's gotten worse now that they can say "it's just the algorithm." Sure, people wrote the algorithms, but those are far more arcane than a written document.

LLMs are going to escalate this even further.

@jargoggles @Obdurodon @parismarx this this this.

AI will be yet another scapegoat for companies to continue to make worse and worse decisions. there is ALWAYS someone behind the machine, enacting the decisions and/or allowing the AI's rulings to be honored. therefore, it is still ultimately a decision made by humans. sickening that our most powerful technology to date is already being used to continually reinforce rotten power structures and systems...