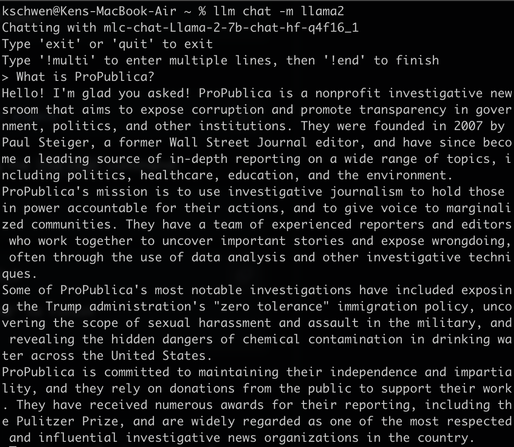

I installed a locally hosted LLM using @simon's excellent `llm` (https://github.com/simonw/llm) tool. It's kind of wild that I just...have this power on my laptop?

@ken I'm constantly amazed at how much information is compressed into that ~13GB Llama 2 7B model.

(It hallucinates wildly too, but still very impressive)
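
For anyone wondering where a figure like ~13GB comes from, here's a rough back-of-the-envelope sketch. It assumes the weights are stored as 16-bit floats (2 bytes per parameter) and treats "7B" as a round 7e9 parameters; the `weight_size_gb` helper is just made up for illustration and isn't part of `llm`.

```python
# Back-of-the-envelope size of a model's weights on disk.
# Assumption: one fixed number of bytes per parameter, no file-format overhead.

def weight_size_gb(n_params: float, bytes_per_param: float = 2.0) -> float:
    """Approximate weight size in decimal gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bytes_per_param / 1e9

# "7B" is taken as a round 7e9 parameters; the real count may differ a little.
fp16_gb = weight_size_gb(7e9)      # assumed 16-bit weights, 2 bytes each
q4_gb = weight_size_gb(7e9, 0.5)   # hypothetical 4-bit quantization, 0.5 bytes each

print(f"16-bit: ~{fp16_gb:.0f} GB, 4-bit: ~{q4_gb:.1f} GB")
```

That comes out around 14 GB in decimal units, or roughly 13 if you count in GiB, so it's in the same ballpark as the number above. A quantized copy would be a lot smaller.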