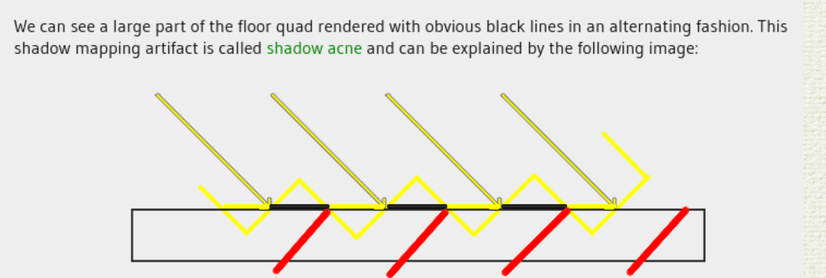

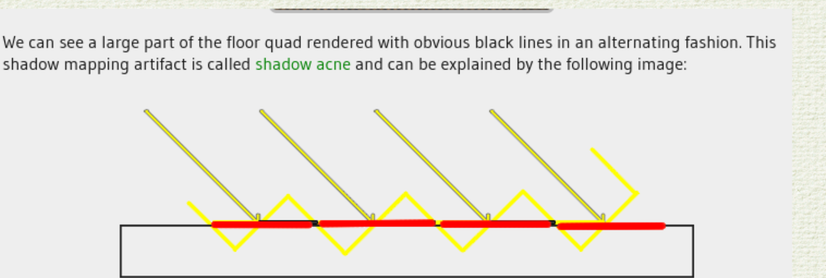

mh. stairstep effects in shadow mapping are just a symptom of inaccurate z-buffer rasterization. the depth written for each shadow-map pixel should be the maximum depth over the intersection of the pixel's footprint and the triangle, not the depth at the pixel center or an average. then the stairstep effects vanish. applying a bias instead is a terrible solution.
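to make "maximum depth over pixel ∩ triangle" concrete, here's a minimal sketch of my own, not taken from any real rasterizer. assumptions: a shadow-map pixel (px, py) covers the square [px, px+1] × [py, py+1], the triangle is non-degenerate, and depth is an affine function of pixel position across the triangle (true for an orthographic, directional-light projection). it clips the pixel square against the triangle with Sutherland–Hodgman and evaluates the triangle's depth plane at the vertices of the clipped polygon. because depth is affine and the clipped region is convex, the maximum has to sit at one of those vertices. all function names are hypothetical.

```python
from typing import List, Optional, Sequence, Tuple

Vec2 = Tuple[float, float]
Vertex = Tuple[float, float, float]  # (x, y, depth) in shadow-map pixel coordinates


def plane_depth(tri: Sequence[Vertex], p: Vec2) -> float:
    """Depth of the triangle's plane at point p, via barycentric interpolation."""
    (x0, y0, z0), (x1, y1, z1), (x2, y2, z2) = tri
    det = (y1 - y2) * (x0 - x2) + (x2 - x1) * (y0 - y2)
    l0 = ((y1 - y2) * (p[0] - x2) + (x2 - x1) * (p[1] - y2)) / det
    l1 = ((y2 - y0) * (p[0] - x2) + (x0 - x2) * (p[1] - y2)) / det
    return l0 * z0 + l1 * z1 + (1.0 - l0 - l1) * z2


def clip_to_edge(poly: List[Vec2], a: Vec2, b: Vec2) -> List[Vec2]:
    """One Sutherland-Hodgman step: keep the part of poly left of edge a->b."""
    def side(p: Vec2) -> float:
        return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

    out: List[Vec2] = []
    for i, cur in enumerate(poly):
        nxt = poly[(i + 1) % len(poly)]
        sc, sn = side(cur), side(nxt)
        if sc >= 0:
            out.append(cur)
        if (sc >= 0) != (sn >= 0):
            t = sc / (sc - sn)  # where this polygon edge crosses the clip line
            out.append((cur[0] + t * (nxt[0] - cur[0]),
                        cur[1] + t * (nxt[1] - cur[1])))
    return out


def conservative_max_depth(tri: Sequence[Vertex], px: int, py: int) -> Optional[float]:
    """Max depth over (pixel square ∩ triangle), or None if they don't overlap."""
    pts = [(x, y) for x, y, _ in tri]
    # make the triangle counter-clockwise so "left of each edge" means "inside"
    area2 = ((pts[1][0] - pts[0][0]) * (pts[2][1] - pts[0][1])
             - (pts[1][1] - pts[0][1]) * (pts[2][0] - pts[0][0]))
    if area2 < 0:
        pts = [pts[0], pts[2], pts[1]]
    poly: List[Vec2] = [(px, py), (px + 1.0, py), (px + 1.0, py + 1.0), (px, py + 1.0)]
    for i in range(3):
        poly = clip_to_edge(poly, pts[i], pts[(i + 1) % 3])
        if not poly:
            return None  # triangle does not touch this pixel
    # depth is affine over the triangle, so its max over the convex region is at a vertex
    return max(plane_depth(tri, p) for p in poly)


def center_depth(tri: Sequence[Vertex], px: int, py: int) -> float:
    """What a standard rasterizer stores: depth sampled at the pixel center."""
    return plane_depth(tri, (px + 0.5, py + 0.5))
```

for a triangle with vertices (0, 0, 0), (10, 0, 1), (0, 10, 0), whose depth plane is z = 0.1·x, pixel (2, 2) gets 0.25 from `center_depth` but 0.3 from `conservative_max_depth`. that 0.3 is the deepest point of the surface anywhere inside that pixel.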

the images show how the error comes from taking the depth at the pixel midpoint: if the maximum depth were taken instead (red lines), there would be no issue.
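here's a toy 1-D version of the same argument, again a hypothetical sketch of my own and not from the post. assumptions: a directional light, a single sloped plane that is both occluder and receiver, depth = 0.5·x, shadow-map texels of width 1.0, and no bias at all. the scene receives light everywhere, so every "shadowed" sample counts as self-shadowing (acne). with midpoint depths, every receiver point in the far half of a texel ends up deeper than the stored value. with per-texel max depths, no receiver point can be.

```python
def surface_depth(x: float, slope: float = 0.5, offset: float = 0.0) -> float:
    """Light-space depth of a sloped receiver plane (hypothetical 1-D scene)."""
    return slope * x + offset


def build_shadow_map(num_texels: int, texel_width: float, mode: str) -> list:
    """Rasterize the surface into a 1-D shadow map.

    mode="center" stores the depth at the texel midpoint;
    mode="max" stores the maximum depth over the texel's footprint.
    """
    depths = []
    for i in range(num_texels):
        x0, x1 = i * texel_width, (i + 1) * texel_width
        if mode == "center":
            depths.append(surface_depth(0.5 * (x0 + x1)))
        else:
            # depth is linear in x, so its max over [x0, x1] is at an endpoint
            depths.append(max(surface_depth(x0), surface_depth(x1)))
    return depths


def count_self_shadowed(shadow_map: list, texel_width: float, samples_per_texel: int) -> int:
    """Count receiver samples that wrongly test as shadowed (no bias applied)."""
    shadowed = 0
    for k in range(len(shadow_map) * samples_per_texel):
        x = k * texel_width / samples_per_texel
        texel = int(x // texel_width)
        # in shadow if the receiver is farther from the light than the stored depth
        if surface_depth(x) > shadow_map[texel]:
            shadowed += 1
    return shadowed


center_map = build_shadow_map(10, 1.0, "center")
max_map = build_shadow_map(10, 1.0, "max")
print(count_self_shadowed(center_map, 1.0, 8))  # 30 of 80 samples: acne
print(count_self_shadowed(max_map, 1.0, 8))     # 0 of 80 samples
```

the numbers are chosen to be exactly representable in floating point, so the counts aren't distorted by rounding. the toy only covers the surface-shadowing-itself case from the images.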