Controlled experiment finds no detectable citation bump from Twitter promotion https://www.biorxiv.org/content/10.1101/2023.09.17.558161v1 Good that article choice and tweet timing were randomized. In an observational setting, people likely tweet about the most interesting papers, which also tend to perform better on attention metrics (citations, Altmetrics, etc.).

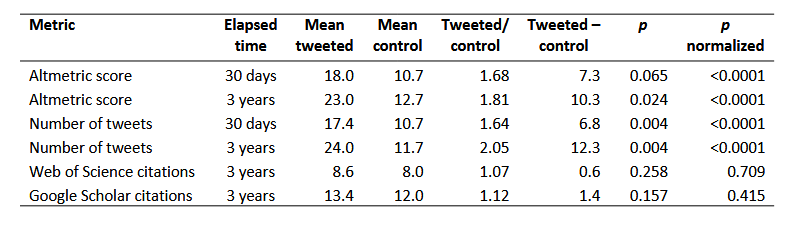

- Altmetrics and tweets are boosted.

- Citations higher after three years, but w/o statistical significance.

Three thoughts on this: 1/

@academicchatter
