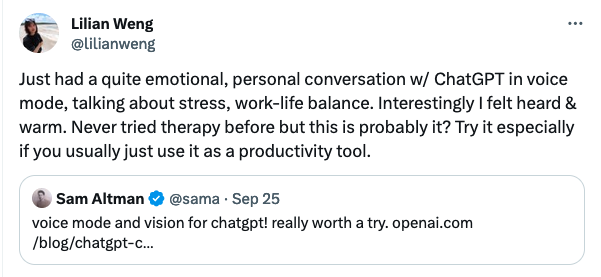

So the "AI Safety" people at the chatbot company will not be the ones who think about, I don't know, the ELIZA chatbot from 60 years ago and the issues that arose, let alone with this one?

@timnitGebru someone in a Discord I'm in recently shared the output they got from what is clearly a wrapper on an LLM (a la ELI5 but with more options; "explain like X"). They prompted it for "the most intuitive" description of BPD and felt like its output was super validating, and other people chimed in agreeing that it's fair/realistic/accurate/affirming. I'm not sure if they realized it's an LLM (surely they must? but?), and I don't want to yuck their yum, but 😓