Apple's statement is the death knell for the idea that it's possible to scan everyone's comms AND preserve privacy.

Apple has many of the best cryptographers + software engineers on earth + infinite $.

If they can't, no one can. (They can't. No one can.)

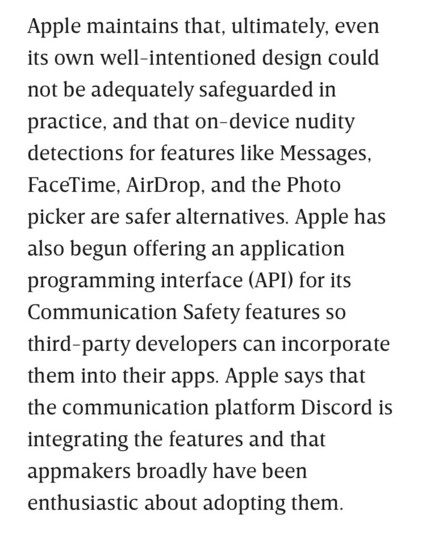

https://www.wired.com/story/apple-csam-scanning-heat-initiative-letter/