Why? This is just pointless sabotage.

And... any decent AI training model already has to filter out garbage, so it would be unaffected.

What are you trying to accomplish?

AI models will provide huge benefits. (They already are.) Why not focus on the real problems, which are the same old enemies of capitalism and corrupt governance?

@sean @anildash so-called "AI" could possibly provide huge benefits if the gains were for the public, but as with almost all capitalist endeavors, the gains are concentrated in the hands of the very wealthy. And remember, the ultimate goal of the very wealthy is simply to do away with human workers, because robots are less troublesome than humans demanding rights, etc. I think the reaction against AI is at least partially from a growing realization of this fact.

Also, AI is already causing real harms, not just theoretical. I mean things like racially biased facial recognition systems, people's reputations being destroyed because of false ChatGPT results, etc. People shouldn't rely on results from AI, but they do, and the AI systems are certainly marketed as being reliable.

So you admit the problem is capitalism and governance, not AI itself.

We should WANT to do away with human labor. Fully automated luxury communism isn't a pipe dream anymore. The threat is that the wealthy will simply buy machines to make everything, fire human workers and leave them to starve. But then who will they be making products and services FOR?

Eventually it's going to have to come around to paying people for not working, because otherwise no one will be able to buy the work product of the machines. And there will be no purpose for running the machines. And nothing to keep making money for the wealthy.

This is a political problem, not a technological one, and, same as it ever was, it all goes back to politics and the need to control wealth.

@sean @anildash the problem is both capitalism and the development of any technology that furthers wealth/power disparity. I already listed some specific problems with AI today. Blindly plowing ahead with all tech, without regard to the current power structures, can just concentrate power even further.

Sure, I'd love to provide all humans with a life of leisure, if that were the direction we were going. But it's not. Once all work is automated, the controlling class won't need anyone to sell anything to, or have any other need for anyone else. That's how they seem to see it, anyway. I think that view is mistaken, not least because the tech they rely on will inevitably have problems that need to be fixed by capable workers, but I really get the sense they're aiming to automate everything so they don't have to deal with human workers. Sure, they could share the life of leisure with everyone (by letting them survive), but they have no incentive to, and no track record of doing so.

It's the desert island problem: You can't ever build wealth without workers to hire. And once you build wealth it does you no good without workers to hire.

I agree wealth is a HUGE political problem. But trying to suppress technology won't solve it. Someone is going to create this tech, no matter how many people oppose it.

So we're back where we started, solving the political problem of oligarchy.

@sean @jamesmarshall @anildash I don't think it will necessarily lead to UBI. I think the most probable end of climate change + automation is a global Brazil: a comfortable upper class that still has access to all amenities despite the climate, a destitute, surveilled and controlled lower class, and tiny shreds of what was considered the middle class.

If there is any UBI, it'll only be there to quell bread riots, and it might just be bread instead of money.

@sean @anildash I really don’t think protecting your work from misuse constitutes “sabotage.” We don’t have a collective responsibility to ensure someone’s plagiarism-machine is receiving top-quality data. If they want good data, they should *ask* to use people’s material. Being online is not de-facto consent to any and all appropriation, and “you didn’t say I couldn’t” to morally-questionable unforeseen usage is not legitimate imo.

Also “blacksun”? What’s up with that?

If AI training is "plagiarism," then so is any research, or use of online data as reference material.

I think it's tough for people to wrap their heads around the fact that language models and generative art are actually writing and painting: creating original work.

I've made this argument repeatedly, and people seem to continue to mischaracterize what AI is doing as mere copying.

Authors and artists continually use prior work as reference. AI is doing the same thing humans have done since the beginning of civilization.

The whole thing is a misdirection. The left has failed to successfully take on capitalist oligarchy. So now people are focused on the proximate cause, technology, as a scapegoat, while ignoring the ultimate cause of our troubles: the worldwide breakdown of democratic governance and the rise of a new feudal class of billionaires.

@anildash once these find data, there's no way to make them stick to using it in the agreed way.

Data poisoners should be everywhere.

My favourite is AdNauseam. It clicks ALL the ads it sees, poisoning the meaning of ad data collection and costing advertisers money.

@anildash Yea, but now you have bad actors poisoning the well with even more propaganda and fake news, and companies determined to use these AI services so humanity will continue to slide ever closer to oblivion.

Blocking is much better for the environment and humanity.

@anildash This is cool but at a single site level it will probably only accelerate the emergence of AIs smart enough to recognise your chosen level of garbage.

However, coordinated, community-level, synchronised garbage could be used to thoroughly poison the training potential of data hoovered up by random crawling.

We should feed them random words with the suffix "porn" and destroy Google's empire.

Mwahahahah!

Just add random word + porn to all the fields of the meta tags with Head.

@inthehands @anildash @misc For the first part, does AdNauseam fit the bill?

@anildash

I just got my data dump from Reddit. My next step will be to replace all my comments with random bits of ChatGPT output.

That is not just garbage; it's garbage that we know disrupts training in a real way.

@anildash I see a possible WordPress plug-in as a start.

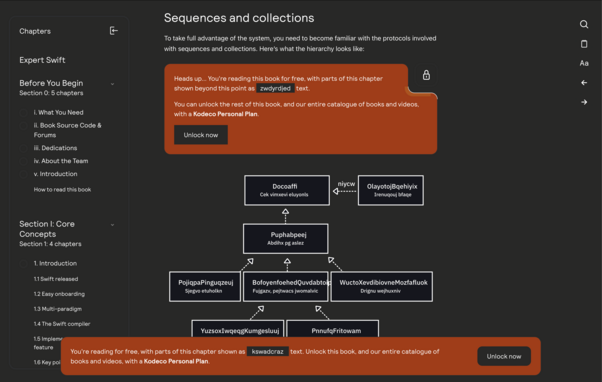

1) relatively hidden links (like those used in honeypots)

2) links point to garbage pages. Maybe the garbage could even be written by an LLM designed to write obviously garbage text.

3) the plug-in creates numerous backdated posts and pages, not easy for a human to see or navigate to, that are easily scraped by bots

4) no SEO on the garbage pages, to reduce the chances of search engines finding them.
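To make the four points above concrete, here's a rough sketch in Python rather than as an actual WordPress plug-in (which would be PHP and use WordPress hooks not shown here). Everything in it is an assumption for illustration: the names `WORDS`, `garbage_text`, `garbage_page`, `hidden_link` and `build_site` are hypothetical, random words stand in for LLM-written garbage, "hidden" means `display:none`, backdating goes back up to about five years, and point 4 is pushed one step further than "no SEO" by adding a `robots` meta tag.

```python
import datetime
import html
import random

# Hypothetical filler vocabulary; the post suggests LLM-written garbage instead.
WORDS = ["lantern", "quietly", "granite", "orbit", "velvet", "seventeen",
         "harbor", "tangent", "marmalade", "circuit", "pebble", "whistle"]


def garbage_text(rng: random.Random, n_words: int = 60) -> str:
    """Return random words chopped into ten-word pseudo-sentences."""
    words = [rng.choice(WORDS) for _ in range(n_words)]
    sentences = []
    for i in range(0, n_words, 10):
        sentences.append(" ".join(words[i:i + 10]).capitalize() + ".")
    return " ".join(sentences)


def garbage_page(rng: random.Random, slug: str, today: datetime.date) -> dict:
    """Build one backdated garbage page (points 2 and 3)."""
    # Point 3 (assumed range): backdate between 30 days and ~5 years.
    published = today - datetime.timedelta(days=rng.randint(30, 5 * 365))
    body = html.escape(garbage_text(rng))
    # Point 4 (assumption): ask compliant search engines to skip this page.
    page = (
        "<!DOCTYPE html>\n<html><head>\n"
        '<meta name="robots" content="noindex, nofollow">\n'
        f"<title>{html.escape(slug)}</title>\n"
        "</head><body>\n"
        f"<p>{body}</p>\n"
        "</body></html>\n"
    )
    return {"slug": slug, "date": published.isoformat(), "html": page}


def hidden_link(slug: str) -> str:
    """Point 1: a honeypot-style link a human visitor won't see or tab to."""
    return (f'<a href="/{html.escape(slug)}/" style="display:none" '
            'tabindex="-1" aria-hidden="true">archive</a>')


def build_site(seed: int, count: int, today: datetime.date):
    """Generate `count` garbage pages plus the hidden links pointing at them."""
    rng = random.Random(seed)  # seeded so the same site is regenerated each time
    pages = [garbage_page(rng, f"archive-{i:04d}", today) for i in range(count)]
    links = "\n".join(hidden_link(p["slug"]) for p in pages)
    return pages, links


if __name__ == "__main__":
    pages, links = build_site(seed=42, count=3, today=datetime.date(2024, 1, 1))
    for p in pages:
        print(p["slug"], p["date"])
    print(links)
```

One trade-off worth noting: `noindex` is only a request that well-behaved crawlers honor. A scraper that ignores it reads the page anyway, which is the point, but any crawler that does respect it will skip the garbage too.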