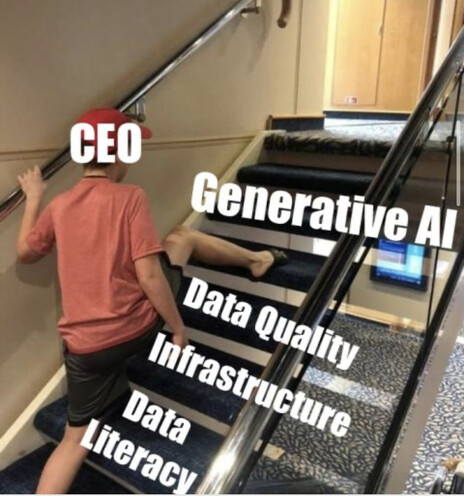

Management “visionary”

@histoftech Speaking of which, did you hear about Mozilla's leadership pushing "AI" into the MDN technical documentation, producing flat-out wrong answers when it's used to describe HTML/CSS code, and then being deliberately disingenuous in response to criticism? https://github.com/mdn/yari/issues/9208

MDN can now automatically lie to people seeking technical information · Issue #9208 · mdn/yari

Summary MDN's new "ai explain" button on code blocks generates human-like text that may be correct by happenstance, or may contain convincing falsehoods. this is a strange decision for a technical ...

@ashteranic @histoftech I just saw they “fixed” it: https://github.com/mdn/yari/pull/9215

That’s like when a lion escapes its cage at the zoo and they just put up a sign at that entrance saying “Caution, loose lion” instead of closing the zoo until the lion is back in its cage.

fix(ai-help): add short and extended explanatory guidance by LeoMcA · Pull Request #9215 · mdn/yari

Summary: #9214, #9208. Problem: We don't explain that outputs from AI Help are not guaranteed to be accurate, and that the links to the docs used to generate the prompt exist to validate the response...

@histoftech Per @TonyJWells "If you say AI LLM five times in front of a mirror, Chatgptman appears and kills your revenue stream."

@histoftech wish I could boost this twice

OMG this is amazing I wish I could boost it once for each skipped step

@histoftech Not sure if this is a joke or warning.

@histoftech ... Or a LinkedIn timeline.