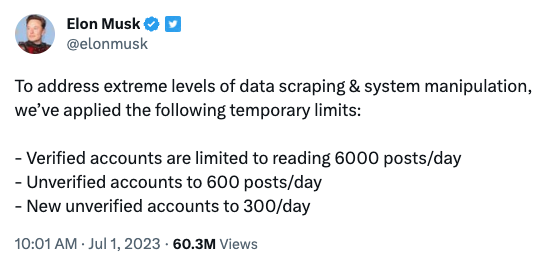

For the last two days, Elon Musk has been publicly freaking out about "EXTREME levels of data scraping," so he added "temporary emergency measures" such as blocking logged-out views and imposing tight rate limits on viewing tweets. But, as apparently first noticed here by @sysop408, a JavaScript bug in the Twitter web app is self-DDOSing Twitter's servers by sending an endless loop of requests, which seems related to the scraping panic. https://waxy.org/2023/07/twitter-bug-causes-self-ddos-possibly-causing-elon-musks-emergency-blocks-and-rate-limits-its-amateur-hour/

Twitter bug causes self-DDOS tied to Elon Musk's emergency blocks and rate limits: "It's amateur hour" - Waxy.org

An "amateur hour" Javascript bug is self-DDOSing Twitter, sending infinite requests from users related to — or possibly even causing — Elon Musk's "temporary emergency measures" to stop web scraping.