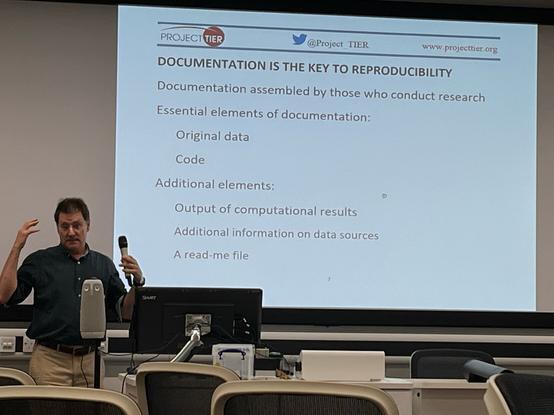

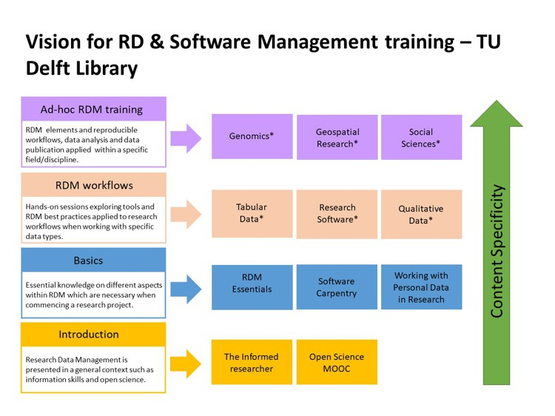

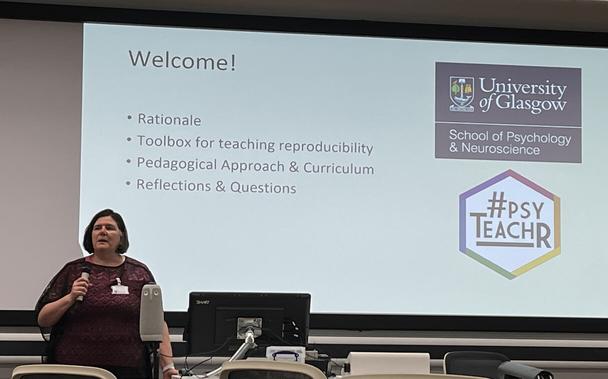

I’m at the University of Sheffield today for the Perspectives on Teaching Reproducibility symposium at the Teaching Reproducible Research and Open Science conference.

https://www.sheffield.ac.uk/smi/events/teaching-reproducible-research-and-open-science-conference

I’ll add links and notes about the day in this thread #OpenResearch #reproducibility #psyTeachR