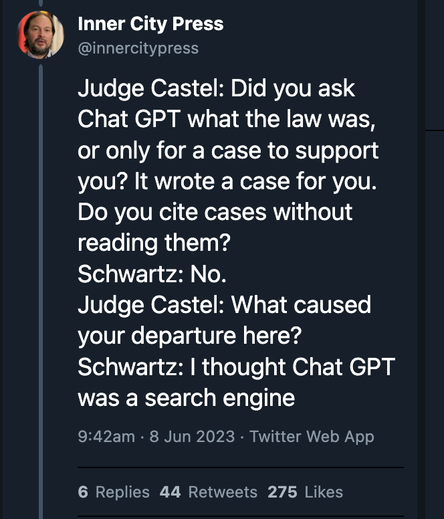

From a live tweet of the proceedings around the lawyer caught using ChatGPT:

"I thought ChatGPT was a search engine".

It is NOT a search engine. Nor, by the way, are the versions of it included in Bing or Google's Bard.

Language model-driven chatbots are not suitable for information access.
