Ironically, through playing with #ChatGPT, I recently learned of a branch of philosophy called "The Philosophy of Action". I hadn't realized that this is what I've been noodling on ever since reading Dennett's "Elbow Room".

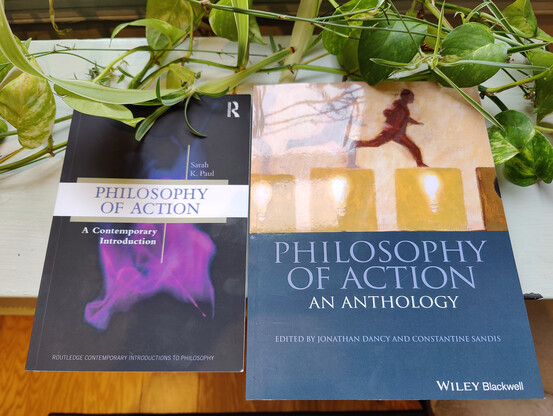

Here are two books I've picked up to learn more: "Philosophy of Action: A Contemporary Introduction" by Sarah K. Paul, and "Philosophy of Action: An Anthology", edited by Dancy and Sandis.