@jasongorman @thirstybear Well, I have worked with "developers" who needed the comments because they were somehow such great developers that they had trouble reading simple statements.

But yes, perhaps we shouldn't orient ourselves by the worst cases of commercial software engineering that we've seen. That can cause depression.

@jasongorman @thirstybear

This thought experiment about the Thai national library (used as training data, minus any books containing non-Thai text or images) illustrates it perfectly:

There's basically no chance that you'll learn Thai, the language, its meaning, from those dead books; even the massive corpus won't help.

You might learn what reasonable Thai text looks like.

So if somebody submits some Thai text to you (say, a question, though obviously you wouldn't know that: you know what Thai looks like, but you have no idea what it means), you might be able to continue it.

But you wouldn't understand whether you had just answered “sure, one large fries coming up with your burger, sir” or “sure, I did murder that family, I confess”.

So the idea that an LLM is capable of explaining your code to you is anthropomorphizing at its worst.

No, it's not your coding buddy.

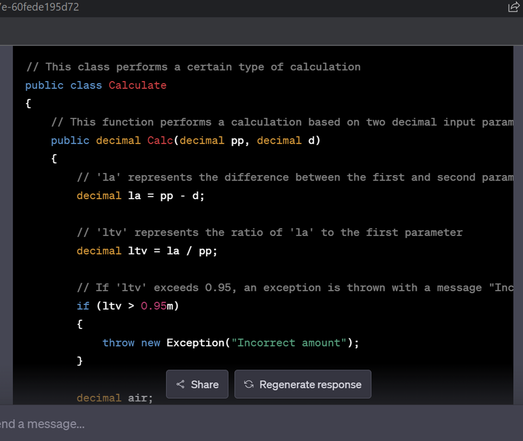

@julian It's actually one of the refuctorings in my Waterfall 2006 presentation: "Stating The Bleeding Obvious"

ChatGPT explains exactly zilch... as expected.

I saw that all the observations were technically correct, but none of them were helpful. If I asked real intelligence (a.k.a. a person), they would (hopefully) explain the function in broader strokes first and then go deeper and deeper until I understood what I needed. 🙂

@jasongorman

OK, repeat slowly after me:

LLMs DO NOT UNDERSTAND ANYTHING.

LLMs predict (based on their training data) the most likely next token, i.e. the token that would most plausibly come next in text like their training data.
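To make "predict the most likely next token" concrete, here is a deliberately tiny sketch: a bigram counter that only records which word followed which in a made-up corpus, then greedily extends a prompt with the most frequent follower. This is an assumption-laden toy, not how ChatGPT is built (real LLMs use a transformer network over subword tokens and usually sample rather than always taking the top choice), and the corpus and function names are hypothetical. The point is the same as the Thai library: it continues text fluently without any notion of what the words mean.

```python
from collections import Counter, defaultdict


def train_bigrams(tokens):
    """Count, for every token, how often each other token directly follows it."""
    followers = defaultdict(Counter)
    for current, nxt in zip(tokens, tokens[1:]):
        followers[current][nxt] += 1
    return followers


def predict_next(followers, token):
    """Return the most frequent follower of `token`, or None if it was never seen."""
    if token not in followers:
        return None
    # Counter.most_common breaks ties by first-encountered order.
    return followers[token].most_common(1)[0][0]


def continue_text(followers, prompt, length):
    """Greedily extend the prompt, one most-likely token at a time."""
    out = list(prompt)
    for _ in range(length):
        nxt = predict_next(followers, out[-1])
        if nxt is None:  # no statistics for this token, so stop
            break
        out.append(nxt)
    return out


# Hypothetical toy corpus: the "model" only ever sees token sequences, never meaning.
corpus = "sure one large fries coming up sure one burger coming up sir".split()
model = train_bigrams(corpus)
print(" ".join(continue_text(model, ["sure"], 5)))
```

Swap the corpus for one about confessions and the same code will just as happily continue with those; nothing in it knows the difference.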

(Yes, you can express what ChatGPT does as a formal statistical formula, but somehow about 98% of humanity runs crying for help when you show it to them.)
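For the remaining 2%, one standard way to write it down (a textbook formulation of autoregressive language modelling, not anything specific to ChatGPT's internals): the model with parameters $\theta$ factorizes the probability of a token sequence $x_1, \dots, x_T$ into next-token predictions, and greedy decoding simply picks the most probable token $v$ from the vocabulary $V$ at each step.

$$
P_\theta(x_1, \dots, x_T) = \prod_{t=1}^{T} P_\theta\left(x_t \mid x_1, \dots, x_{t-1}\right),
\qquad
\hat{x}_t = \arg\max_{v \in V} P_\theta\left(v \mid x_1, \dots, x_{t-1}\right)
$$

Note that no term in it refers to meaning; $\theta$ is fitted only so that these conditional probabilities match the training text.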