We are really excited to share that we have just released the alpha version of Prodigy v1.12! This includes LLM-assisted workflows for data annotation and prompt engineering as well as extended, fully customizable support for multi-annotator workflows.

Prodigy 1.12 alpha release: LLM-assisted workflows, prompt engineering & fully custom task routing for multi-annotator scenarios.

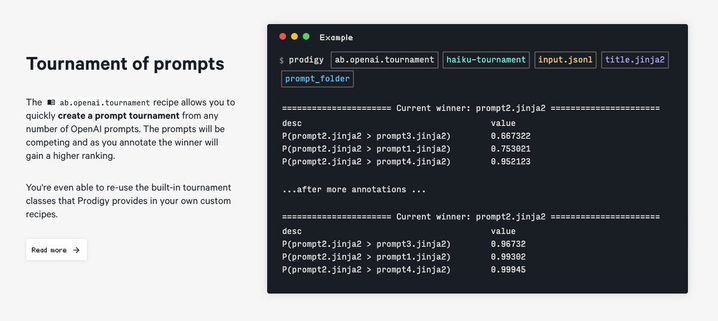

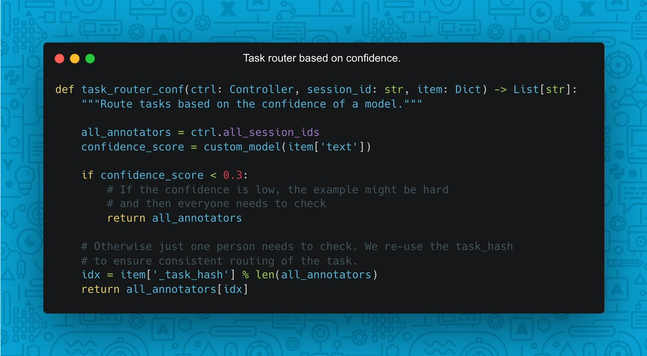

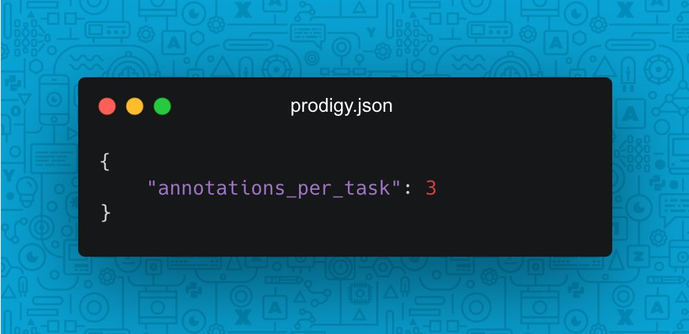

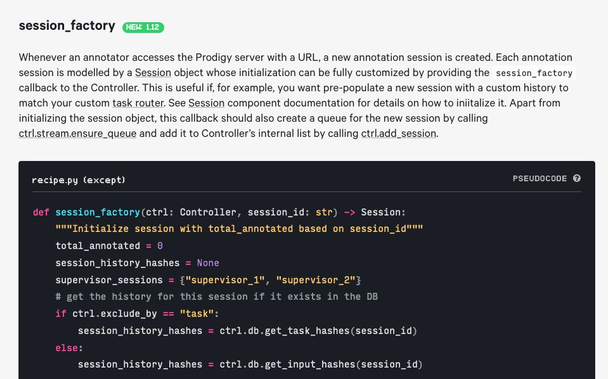

Hey everyone! We are really excited to share that we have just released the alpha version of Prodigy v1.12 (v1.12a1)! This release is available for download for all v1.11.x license holders and includes:

- New recipes for LLM-assisted annotation and prompt engineering: the LLM-assisted workflows we announced a while ago are now fully integrated with Prodigy and available out of the box. In v1.12a1 you're still restricted to the OpenAI API to use them, but by v1.12a2 we definitely want to...
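The release also advertises fully custom task routing for multi-annotator scenarios. To illustrate the general idea only, here is a minimal, self-contained Python sketch of a routing function that decides which annotators should see a given task. The function name `route_task`, its signature, the `"text"` key on tasks and the hash-based strategy are all hypothetical assumptions. This is not Prodigy's actual task-router API.

```python
import hashlib
from typing import Dict, List


def route_task(task: Dict, annotators: List[str], per_task: int = 2) -> List[str]:
    """Hypothetical router (not Prodigy's API): pick `per_task` annotators for a task.

    Assumes each task dict has a "text" key. The choice is derived from a
    stable hash of that text, so the same task is always routed to the same
    annotators, even across restarts.
    """
    if not annotators:
        return []
    # Never ask for more annotators than exist.
    per_task = min(per_task, len(annotators))
    digest = hashlib.sha256(task["text"].encode("utf-8")).hexdigest()
    start = int(digest, 16) % len(annotators)
    # Take consecutive annotators, wrapping around, starting at the hashed offset.
    return [annotators[(start + i) % len(annotators)] for i in range(per_task)]


if __name__ == "__main__":
    team = ["alex", "sam", "kim"]  # hypothetical annotator names
    for text in ["First example", "Second example", "Third example"]:
        print(text, "->", route_task({"text": text}, team))
```

Hashing the task text with `hashlib` rather than Python's built-in `hash()` keeps the routing stable between runs, because `hash()` on strings is randomized per process. The `per_task` parameter controls how much annotation overlap each task gets.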