@paninid @astro_jcm @rysiek I already run local LLMs and am working on interfacing audio-to-text ;) This will soon be here. Free. Open source.

It probably won't be me who manages to put something together, but all the tech is here, and omg, there are many of us who just need to get the actual content out of these 8 min+ (needed for monetization) YouTube videos ...

@troed @paninid @astro_jcm @rysiek

Any advice or resources on building local LLMs? I'm working on a personal project of a local LLM and am always looking for ideas.

@faberfedor @paninid @astro_jcm @rysiek Well, building, if you mean training from scratch, is still way out of consumer hardware's league, but just using one of the LLMs through Oobabooga works fine. I have a 12GB VRAM GPU and have used various GPTQ 13B models.

I run a local chatbot for the family matrix channel that way: https://blog.troed.se/2023/03/19/create-your-own-locally-hosted-family-ai-assistant/

What I'm working on now is choosing which audio2text product to interface this with. For text2audio I already use Tortoise TTS.

So the thinking here is that when I have the audio2text up I can then pipe that through a model with a large enough context window and simply ask the LLM for a summary. In chat mode, this could then also be probed with detailed questions (keeping the same context).

I've had real-time use cases in mind mostly, but that's not needed here, which means there are probably quite a few audio2text projects to go back to.
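
A rough sketch of the transcript-then-ask idea described above, in Python. Nothing here is tied to a specific backend: `generate` is a hypothetical stand-in for whatever serves the model (a prompt string in, text out), `TranscriptChat` and `max_chars` are made-up names, and the character budget is only a crude proxy for a real token-based context window.

```python
from typing import Callable, List

# Hypothetical backend hook: takes a prompt string, returns the model's text.
Generate = Callable[[str], str]


class TranscriptChat:
    """Keep the transcript in every prompt so follow-up questions share one context."""

    def __init__(self, transcript: str, generate: Generate, max_chars: int = 8000):
        # Assumption: a character budget approximates the model's context window.
        if len(transcript) > max_chars:
            raise ValueError("transcript does not fit the assumed context budget")
        self.transcript = transcript
        self.generate = generate
        self.history: List[str] = []  # alternating "User: ..." / "Assistant: ..." lines

    def ask(self, question: str) -> str:
        # Prompt = transcript + earlier turns + the new question.
        prompt = "\n".join(
            ["Transcript:", self.transcript, *self.history,
             f"User: {question}", "Assistant:"]
        )
        answer = self.generate(prompt).strip()
        self.history += [f"User: {question}", f"Assistant: {answer}"]
        return answer

    def summarize(self) -> str:
        # The summary is just the first question in the same conversation.
        return self.ask("Summarize the transcript above in a few sentences.")
```

Because the summary request goes through the same `ask` path as everything else, any detailed question asked afterwards sees both the transcript and the earlier answers. A transcript longer than the budget would need chunking or a larger-context model, which this sketch does not attempt.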

> one of the LLMs through Oobabooga

That's a(nother) new one to me.

My idea is to train in the cloud, fine-tune it (locally?) and host it locally. The fine-tuning is going to be more personal data: notes, tweets, emails, etc. How to do updates, OTOH...<shrug>

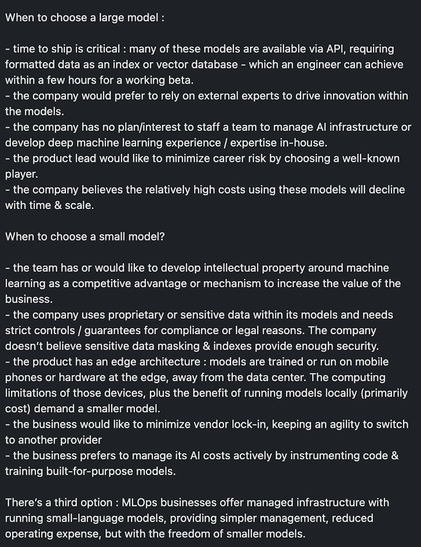

ATM I'm building an MLOps pipeline just 'cuz. I hadn't thought about an agent UI since my initial goal didn't require a UI.

Thanks for the blog post. It'll give me something to do while my bread bakes. 🙂

Thanks for that. Looks like a decent way to differentiate customers for my freelance business.