The most popular Arxiv link yesterday (via srush_nlp@twitter):

Pretraining without Attention (https://t.co/Y2P32MheXc) - BiGS is alternative to BERT trained on up to 4096 tokens.

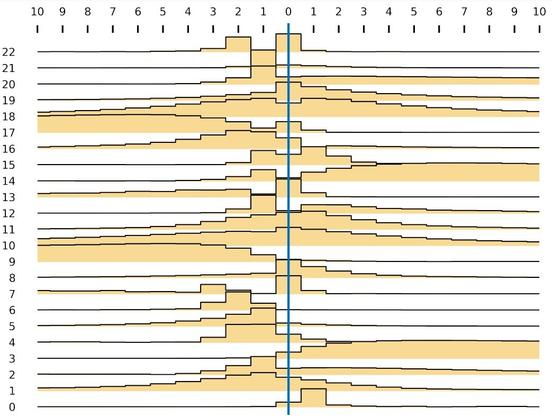

Attention can be overkill. Below shows *every* word-word interaction for every sentence over 23 layers of BiGS (no heads, no n^2). https://t.co/bZVkaXH2r7

Pretraining Without Attention

Transformers have been essential to pretraining success in NLP. While other architectures have been used, downstream accuracy is either significantly worse, or requires attention layers to match standard benchmarks such as GLUE. This work explores pretraining without attention by using recent advances in sequence routing based on state-space models (SSMs). Our proposed model, Bidirectional Gated SSM (BiGS), combines SSM layers with a multiplicative gating architecture that has been effective in simplified sequence modeling architectures. The model learns static layers that do not consider pair-wise interactions. Even so, BiGS is able to match BERT pretraining accuracy on GLUE and can be extended to long-form pretraining of 4096 tokens without approximation. Analysis shows that while the models have similar average accuracy, the approach has different inductive biases than BERT in terms of interactions and syntactic representations. All models from this work are available at https://github.com/jxiw/BiGS.