Fucking Christ, the AT Protocol is the most obtuse crock of shit I've ever looked at. It is complex solely for the sake of being complex and still suffers from *all* of the same problems as Mastodon.

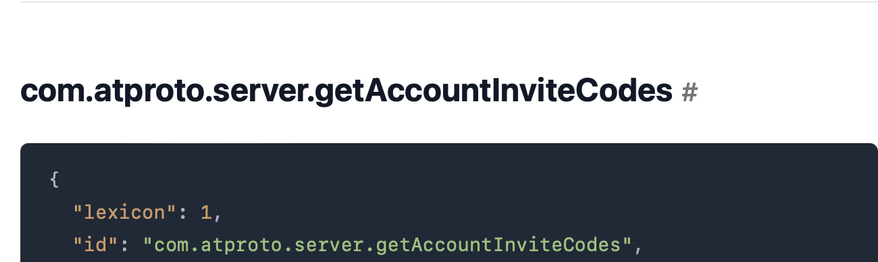

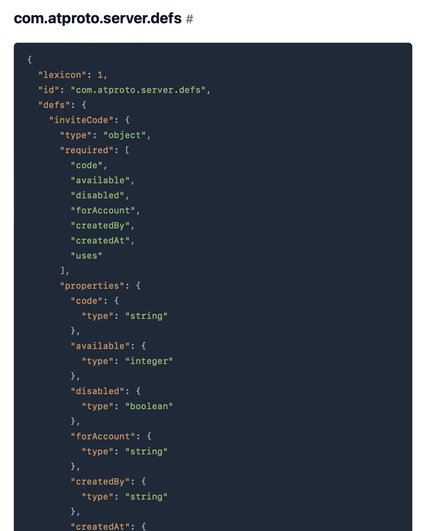

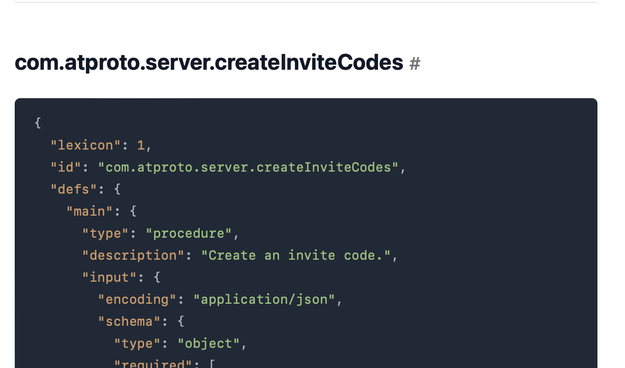

Your server goes down? Sorry, all of your followers are lost. Account portability is no better than Mastodon. 'DIDs' serve literally no purpose. And none of the API code that Bluesky uses in their own app validates ANY of the crypto they're doing on the server. NONE OF IT.