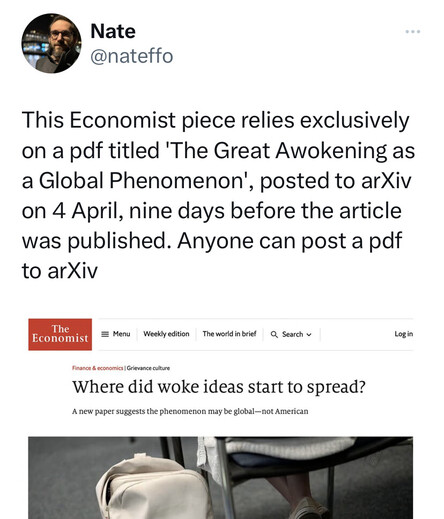

People on Twitter jump to blame bad things on #preprints (e.g., @nateffo), but the reasoning is weak. How would stricter screening at arXiv have prevented this kind of bad journalism? /1

First, of course, it's false that "anyone can post a pdf to arXiv." So, score one for trustworthy reporting. (Heck, anyone can bash preprints on Twitter) /2

Second, the author of the preprint is a nobody academic (no offense) with a few conservative op-eds and some computer science papers. If a journalist is going to trust them as their only source, that's on the journalist. It's not like arXiv is the only way to distribute PDFs /3

In general, when analyzing trustworthiness in the information ecosystem, a lot of people fail to employ sound methods, ignore counterfactuals, and make motivated causal arguments in support of the hierarchical status quo /4

Instead of blaming preprints, in this case @nateffo could just as logically have blamed graduate education (he has a PhD and did a postdoc) or the tenure system (he is an associate professor). Or blame the Economist, which uses prestige to press a political agenda. These are all signals of trustworthiness. Blaming preprints in this case is not justified (but good for academic clicks) /5

Academics make a big mistake thinking our job is to prevent the dissemination of bad things. We have no power to do that. Our job is to create and share knowledge, which includes certification or endorsement (degrees, peer review, etc). We can gatekeep certification, not dissemination.

Journals are partly to blame for the tendency to conflate peer review with publishing. It is reasonable and good to say about bad work, "over my dead body will this be in an important journal!" But pointless to say, "over my dead body will this PDF be on the internet!"