"Learning to Be Fair: A Consequentialist Approach to Equitable Decision-Making"

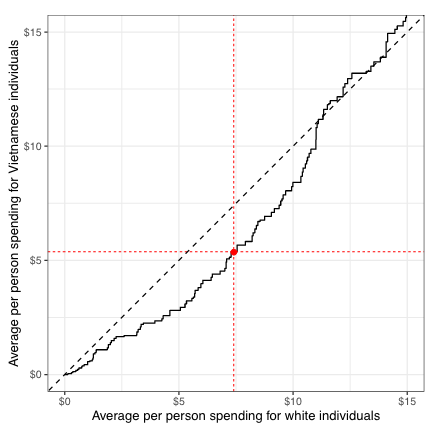

When designing fair machine learning systems, one common approach is to enforce parity in error rates across groups defined by race, gender, and similar attributes. How fairness is defined statistically turns out to matter a great deal: some strategies sound fair but ignore downstream effects, and they can cause unexpected harm to the very groups they are meant to protect.

In an attempt to make algorithms fair, the machine learning literature has largely focused on equalizing decisions, outcomes, or error rates across race or gender groups. To illustrate, consider a hypothetical government rideshare program that provides transportation assistance to low-income people with upcoming court dates. Following this literature, one might allocate rides to those with the highest estimated treatment effect per dollar, while constraining spending to be equal across race groups. That approach, however, ignores the downstream consequences of such constraints, and, as a result, can induce unexpected harms. For instance, if one demographic group lives farther from court, enforcing equal spending would necessarily mean fewer total rides provided, and potentially more people penalized for missing court. Here we present an alternative framework for designing equitable algorithms that foregrounds the consequences of decisions. In our approach, one first elicits stakeholder preferences over the space of possible decisions and the resulting outcomes--such as preferences for balancing spending parity against court appearance rates. We then optimize over the space of decision policies, making trade-offs in a way that maximizes the elicited utility. To do so, we develop an algorithm for efficiently learning these optimal policies from data for a large family of expressive utility functions. In particular, we use a contextual bandit algorithm to explore the space of policies while solving a convex optimization problem at each step to estimate the best policy based on the available information. This consequentialist paradigm facilitates a more holistic approach to equitable decision-making.
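The rideshare example can be made concrete with a small toy calculation. The sketch below is illustrative only and is not the paper's algorithm: the `Person` fields, the costs and effects, the $90 budget, and the linear `utility` with its `parity_weight` are all assumptions made for this example. It compares a greedy allocation by estimated effect per dollar with the same allocation under an equal-spending-per-group constraint, then scores both with a hypothetical utility that trades appearance gains against the spending gap.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Person:
    group: str     # hypothetical demographic group label
    cost: float    # ride cost in dollars (farther from court = pricier)
    effect: float  # assumed gain in appearance probability from a ride


def greedy(people, budget):
    """Fund rides in order of effect per dollar while the budget allows."""
    chosen, spent = [], 0.0
    for p in sorted(people, key=lambda q: q.effect / q.cost, reverse=True):
        if spent + p.cost <= budget:
            chosen.append(p)
            spent += p.cost
    return chosen


def equal_spending(people, budget):
    """Split the budget evenly across groups, then allocate greedily within each."""
    groups = sorted({p.group for p in people})
    share = budget / len(groups)
    chosen = []
    for g in groups:
        chosen += greedy([p for p in people if p.group == g], share)
    return chosen


def spending_gap(chosen, people):
    """Difference between the highest and lowest per-group spending."""
    spend = {p.group: 0.0 for p in people}
    for p in chosen:
        spend[p.group] += p.cost
    return max(spend.values()) - min(spend.values())


def utility(chosen, people, budget, parity_weight):
    """Hypothetical stakeholder utility: appearance gains minus a parity penalty."""
    gained = sum(p.effect for p in chosen)
    return gained - parity_weight * spending_gap(chosen, people) / budget


# Toy population: group B lives farther from court, so its rides cost more.
people = [Person("A", 10.0, 0.2) for _ in range(6)] + \
         [Person("B", 30.0, 0.2) for _ in range(6)]
budget = 90.0

unconstrained = greedy(people, budget)
constrained = equal_spending(people, budget)

for name, policy in [("unconstrained", unconstrained), ("equal spending", constrained)]:
    print(name, "rides:", len(policy), "gap:", spending_gap(policy, people))
```

With these made-up numbers, the unconstrained policy funds 7 rides with a $30 spending gap, while the equal-spending policy funds 5 rides with a $10 gap. Which one scores higher under `utility` depends on `parity_weight`: a small weight favors the unconstrained policy and a large weight favors the constrained one. That is the kind of trade-off the abstract proposes to settle by eliciting stakeholder preferences rather than by fixing a constraint in advance.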