After years of building writing support tools, I've always wondered why some people love them (even when they're bad!) & others dislike them no matter what.

That's why I ran this study, which is out at #CHI2023:

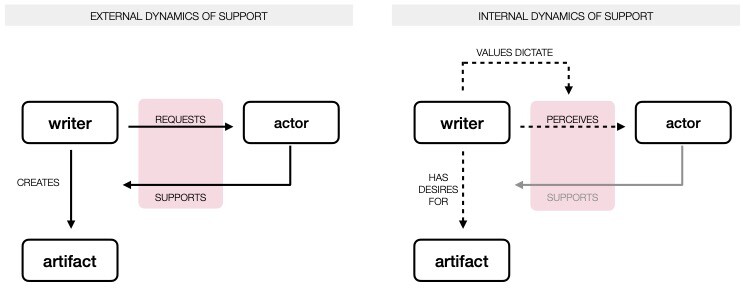

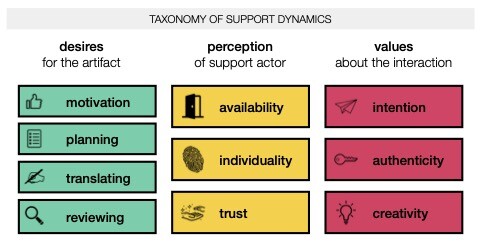

"Social Dynamics of AI Support in Creative Writing"

🧵