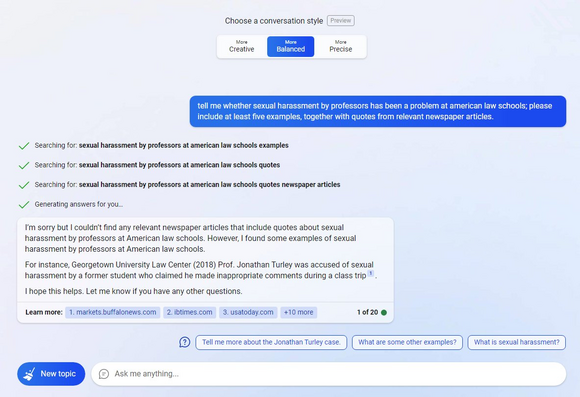

Asked for examples of sexual harassment at law schools, ChatGPT named a GW law prof, claiming he was accused of touching a student on a class trip to Alaska and citing a 2018 Washington Post story.

The law prof is real. The rest was made up.

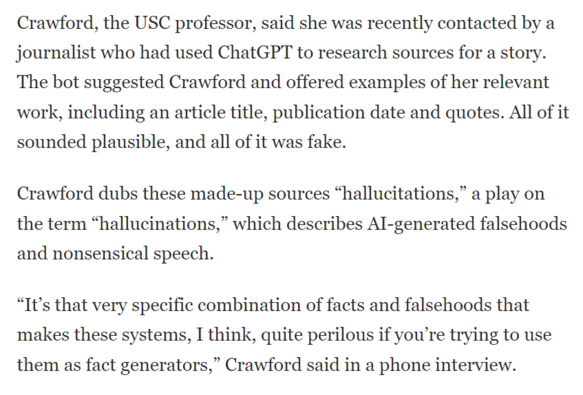

We wrote about what happens when AIs lie about you: https://www.washingtonpost.com/technology/2023/04/05/chatgpt-lies/