1/ PyVBMC 1.0 is out! 🎉

https://github.com/acerbilab/pyvbmc

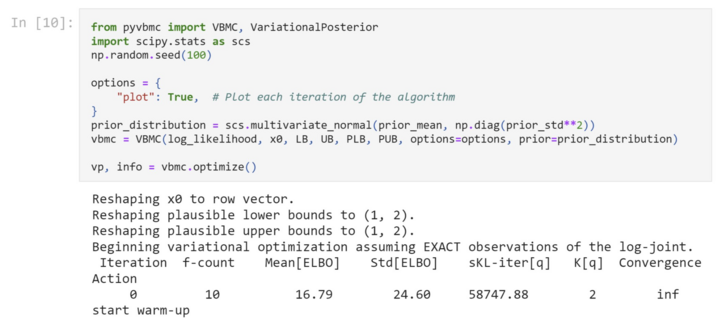

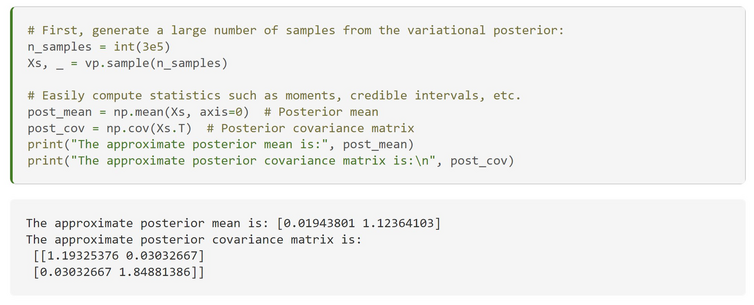

A new Python package for efficient Bayesian inference.

Get a posterior distribution over model parameters + the model evidence with a small number of likelihood evaluations.
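
To make those two outputs concrete, here is a toy sketch of what "posterior + model evidence" means for a one-parameter model. This is NOT PyVBMC or its algorithm: it is a brute-force grid integration in plain Python, and the data, prior, and grid are all made up for illustration. It spends 2001 evaluations on a single parameter, which is exactly the cost a sample-efficient method tries to avoid.

```python
import math

def log_normal_pdf(x, mean, sd):
    """Log density of a normal distribution."""
    return -0.5 * math.log(2 * math.pi * sd**2) - 0.5 * ((x - mean) / sd) ** 2

# Hypothetical data, assumed drawn from Normal(mu, 1) with unknown mu.
data = [1.2, 0.8, 1.5, 0.9, 1.1]

def log_joint(mu):
    """Log prior plus log likelihood (the unnormalized log posterior)."""
    log_prior = log_normal_pdf(mu, 0.0, 2.0)  # assumed prior: mu ~ Normal(0, 2)
    log_lik = sum(log_normal_pdf(x, mu, 1.0) for x in data)
    return log_prior + log_lik

def trapezoid(ys, step):
    """Trapezoidal rule on an evenly spaced grid."""
    return step * (sum(ys) - 0.5 * (ys[0] + ys[-1]))

step = 0.01
grid = [-10.0 + i * step for i in range(2001)]  # one evaluation per grid point

joint = [math.exp(log_joint(mu)) for mu in grid]

# Model evidence: the integral of prior * likelihood over the parameter.
evidence = trapezoid(joint, step)
log_evidence = math.log(evidence)

# Posterior: the joint density normalized by the evidence.
posterior = [j / evidence for j in joint]
posterior_mean = trapezoid([mu * p for mu, p in zip(grid, posterior)], step)

print(f"log evidence:   {log_evidence:.4f}")
print(f"posterior mean: {posterior_mean:.4f}")
```

Because this toy model is conjugate, the posterior mean can be checked by hand: (sum of data) / (n + 1/4) = 5.5 / 5.25.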

No AGI was created in the process!

PyVBMC: Variational Bayesian Monte Carlo algorithm for posterior and model inference in Python