I was heartened yesterday that the Supreme Court is grasping for a more sophisticated vocabulary for how recommendation algorithms work. We’re overdue for a thoughtful reckoning about the interplay between algorithmic ranking and the surges of user activity it purports to measure, and how that interplay shapes the public sphere. But the question for the Supreme Court isn’t that; it’s whether a key division of responsibility enshrined in Section 230 should persist. Some thoughts… [1/22]

Section 230 was not designed to make platforms “neutral.” It was designed to distinguish the responsibility we demand of someone who posts harmful content, from the responsibility we assign to the platform hosting it. If a video is defamatory, obscene, threatening, 230 says we should focus responsibility on the person posting it; but we should not let that interfere with the responsibilities of platforms: host content, deliver content to users, and moderate that content as they see fit. [2/22]

This division of responsibility rubs some people the wrong way, especially as the nature and scope of these harms grow. It does remove one way of forcing platforms to moderate with greater care. It also rubs some people the wrong way because a few of these platforms have grown enormously profitable and powerful, in part by downplaying those harms to their users and advertisers and pawning the caustic labor of moderation off on the least protected workers. [3/22]

This division of responsibility rubs some people the wrong way, especially as the nature and scope of these harms grow. It does remove one way of forcing platforms to moderate with greater care. It also rubs some people the wrong way because a few of these platforms have grown enormously profitable and powerful, in part by downplaying those harms to their users and advertisers and pawning the caustic labor of moderation off on the least protected workers. [3/22]

And the fact that a key part of what platforms and information services do is *recommend* content, not just host it, raises the question of whether limiting accountability to only the offending user makes sense any more. Which does the greater harm: the user who posted the ISIS propaganda video to YouTube, or the algorithm that offered it up to dozens of users, or hundreds, or thousands? [4/22]

Should the division of responsibility enshrined in Section 230 persist? If, or I suppose when, this becomes a question for Congress, the answer is a messy maybe. But for the Supreme Court, who must interpret the law in order to either uphold it or deem it either unconstitutional or inapplicable, the answer is yes. [5/22]

The law says we do not hold a platform accountable for what a user posts to it. Hosting content means making it available; this includes returning it among search results, listing it as a recommendation, delivering it to the user who might want it. And if 230 ensures that platforms may also engage in content moderation, so long as it is in good faith, it should also allow them to recommend content — perhaps, in the spirit of the law, so long as they recommend in good faith. [6/22]

What’s so different about algorithmic recommendation as compared to the “interactive computer services” that the law protected in 1996? One claim is about impact. Powerful algorithms can deliver the offending video to more users. But any design element of an information archive will deliver some content more than others. Even alphabetical ends up privileging some content over others, as demonstrated by the presence of AAA Dry Cleaners in the yellow pages. [7/22]

The first site listed, the example loaded on the front page, the newest post, the advertiser who pays more for greater visibility: these are all mechanisms that pick and choose some content over others, by some criteria, for some reason. To wish that the design of recommendation algorithms were unmotivated is naive. But just because algorithms have priorities doesn’t mean that they’re rigged. [8/22]

A second claim is that purposeful recommendation is tantamount to prior knowledge. This is equally absurd, and ignorant of how machine learning works. YouTube designed its algorithm to predict what you will most want to watch. This does mean knowing all sorts of things: which videos you have already watched, which users have similar viewing patterns as you, which videos have been most often played together. [9/22]

But this does not care about what the actual content of the video is. We do need to grapple with the secondary effects of the information flows created by algorithmic mechanisms in the aggregate; but part of what’s challenging is precisely that algorithmic recommendation systems have these effects without having ever evaluated the content as such. [10/22]

(part 2) When Section 230 protections do come before Congress again, we will have to ask a different question: not only has the technology changed since 1996, but so have the civic goals and priorities that justified it in the first place. [11/22]

At the time, Congress wanted to ensure that young, innovative information services wouldn’t be squelched by the threat of lawsuits. (And, it’s worth remembering, they also wanted to curb obscenity on the internet, the concern that drove the larger Congressional act of which 230 is only a section - the Communication Decency Act, which was soon ruled unconstitutional. It wasn’t entirely about protecting innovative startups.) [12/22]

Today, the question is, what to do with massive platforms whose designs, including the recommendation algorithms, do have cumulative effects? The criteria built into these algorithms, even if they aren’t nefarious, are consequential. The harms - not so much the harms of one person seeing one video, but the aggregate harms of some kinds of content getting more public visibility and others less, have implications for the democratic process and an informed citizenry. [13/22]

It’s been long enough to see that the market has not driven these platforms towards a more rewarding or verdant mix of information -- quite the opposite. So it’s reasonable to suggest that these algorithmic criteria and their effects should be more open to public and regulatory scrutiny, so that we can ask help ensure that the public gets what it needs while also allowing platforms to profit based on giving what it predicts users want. [14/22]

These questions are, as we know, politically fraught. Oddly, the politics around recommendation and the politics around content moderation are sometimes at cross purposes. The U.S. political right has pushed back against platform efforts to expand content moderation, suggesting that they are politically biased. Simultaneously, they also want platforms to be more responsible for what they recommend (or at least need to say that as justification to roll back Section 230 protections). [15/22]

But moderation and recommendation aren’t separate, they work in tandem: platforms avoid recommending content mostly by removing what they deem reprehensible or dangerous. The ISIS videos that the plaintiffs in <i>Gonzalez v. Google</i> object to would not have been recommended if they’d been more diligently removed. So, that the more we demand that content remain online because of its speech rights, the more often it will be recommended, even by an algorithm designed in good faith. [16/22]

If Congress makes it so that Section 230 no longer protects recommendation, platforms are very likely to remove way more content altogether, as well as more drastically reducing what they’re willing recommend - which they do already. Not recommending content that is otherwise there to be seen is exactly what conservatives rail against, mistakenly called “shadowbanning” - but it is the only logical response from platforms if the Court finds for the plaintiffs in this case. [17/22]

Neither of these outcomes are particularly helpful if what we’re actually trying to address is the aggregate harms of information that we’re not willing to simply prohibit. Instead of hoping to do so by extending or curtailing 230, we need to look back to a well-worn, century-long discussion: how to get a media ecosystem, largely or entirely driven by market imperatives, to also serve the public interest? [18/22]

This is something we never solved with traditional media, but our efforts involved setting specific obligations about education, about children, about balance, about incentives towards quality programming, etc. This may sound antiquated, but it is a problem we have always faced, and we face again with social media. [19/22]

The part that’s new, perhaps, is that we also have to figure out our societal tolerance for error. Content moderation, even when performed in good faith, can never be perfectly executed. At this scale, even sophisticated detection software removes some content it shouldn’t, and overlooks some that it should remove; we ask too many people to do too difficult a job with too little support, and as such the standards will invariably be applied inconsistently. [20/22]

If content moderation is imperfect, then what gets recommended will also occasionally include the reprehensible, the harmful, or the illegal. Even if they were applied consistently, we do not agree on the standards; and people are ingenious when it comes to testing and eluding these governance mechanisms. [21/22]

@tarleton

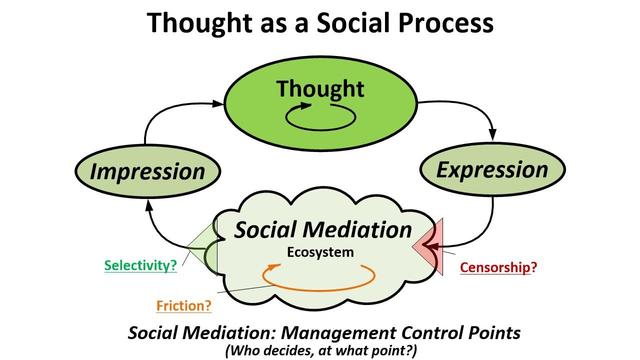

There is an answer to this apparent dilemma that is conceptually simple and well founded, but will take time and effort to perfect, given how far down the wrong road we have gone. "Middleware' (perhaps better called "feedware") that puts users and their communities in control of their feeds. That would restore the "Freedom of IMpression" that we applied for centuries to apply richly democratic social mediation and individual agency. As explained at https://techpolicy.press/from-freedom-of-speech-and-reach-to-freedom-of-expression-and-impression/

There is an answer to this apparent dilemma that is conceptually simple and well founded, but will take time and effort to perfect, given how far down the wrong road we have gone. "Middleware' (perhaps better called "feedware") that puts users and their communities in control of their feeds. That would restore the "Freedom of IMpression" that we applied for centuries to apply richly democratic social mediation and individual agency. As explained at https://techpolicy.press/from-freedom-of-speech-and-reach-to-freedom-of-expression-and-impression/

@tarleton Also you are the right scholar for this convo, as you remind us that these written protections WERE initially rooted in the technologies of broadcast at the time, and therefore CAN be amended to accommodate changing technical capabilities and media landscape over time.

@tarleton It's the "good faith" aspect that kills this discussion. The platforms boost offending content to boost their revenue. That's not good faith.

@tarleton @jeffjarvis We should outlaw roads, because some vehicles are transporting porn. Who will think of the children???

@hagbard @tarleton @jeffjarvis

The road builders and toll admins are, in this case, creating a fast lane for transporting harmful content. So, yeah, think of the children.

@tarleton It seems like if they can get the gradient right from an ISP to a search engine to a recommendation system, they could land on quite a result here. Gorsuch going out of his way to say that GPT like things aren't search engines was especially telling for me.

@danfaltesek I like how you are thinking of them as a gradient, and I agree that we can decide where to draw a line, past which there should be some responsibility for the provider when content is harmful. I guess I don't want it to be in the Court's read of 230, because I'd prefer a different conversation altogether, about aggregate harms to the public rather than individual harms to a user. Feels to me like that can't be framed as a 230 update.

@tarleton Aggregate public harms are hard and often not compatible with a theory of concrete and specific injury that produces standing (thus congress). Gorsuch went out of his way to say that LLMs are not protected by 230, Google's best argument by page 140 is that 230 should protect search engines and that YT is a search engine. It seems like the justices want ICS to include Search but are also clear that 230 isn't everything online.

@tarleton

an excellent thread, thank you!

an excellent thread, thank you!