Simon Willison (@[email protected])

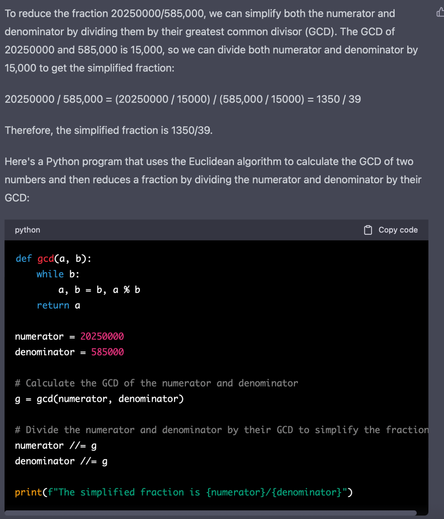

Wow... while we were all making fun of Google's Bard demo for making some small mistakes about the James Webb Space Telescope, it turns out the Bing demo was wildly hallucinating made-up financial comparisons between Gap and Lululemon! https://dkb.blog/p/bing-ai-cant-be-trusted