Happy to share our paper on Learning to Exploit Elastic Actuators for Quadruped Locomotion.

We learn to trot/pronk in under 1.5 hours, directly on the real robot 🐈.

Paper: https://arxiv.org/abs/2209.07171

#RL #reinforcementlearning #reinforcement #learning #robot #robots #locomotion

Learning to Exploit Elastic Actuators for Quadruped Locomotion

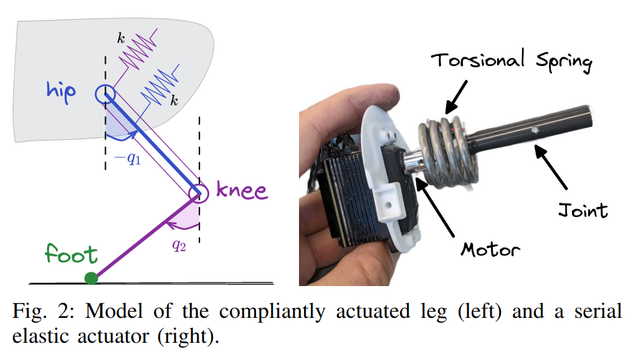

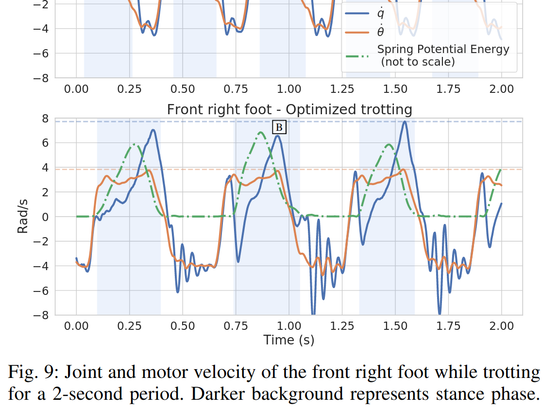

Spring-based actuators in legged locomotion provide energy efficiency and improved performance, but increase the difficulty of controller design. While previous work has focused on extensive modeling and simulation to find optimal controllers for such systems, we propose to learn model-free controllers directly on the real robot. In our approach, gaits are first synthesized by central pattern generators (CPGs), whose parameters are optimized to quickly obtain an open-loop controller that achieves efficient locomotion. Then, to make this controller more robust and further improve the performance, we use reinforcement learning to close the loop by learning corrective actions on top of the CPGs. We evaluate the proposed approach on the DLR elastic quadruped bert. Our results in learning trotting and pronking gaits show that exploitation of the spring actuator dynamics emerges naturally from optimizing for dynamic motions, yielding high-performing locomotion, particularly the fastest walking gait recorded on bert, despite being model-free. The whole process takes no more than 1.5 hours on the real robot and results in natural-looking gaits.
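
For readers who want a feel for the "CPG plus learned corrections" idea, here is a rough Python sketch. It is not the code from the paper: the phase offsets, frequency, step sizes, clipping limit, and all function names are assumptions picked for illustration. It only shows how an open-loop oscillator can produce trot or pronk foot targets, and how small corrections (for example, from an RL policy) could be added on top.

```python
import math

# Phase offset per leg (front-left, front-right, hind-left, hind-right), in cycles.
# Assumed values: diagonal legs move together in a trot, all legs together in a pronk.
GAIT_PHASE_OFFSETS = {
    "trot": (0.0, 0.5, 0.5, 0.0),
    "pronk": (0.0, 0.0, 0.0, 0.0),
}


def cpg_foot_targets(t, gait, frequency_hz=2.0, step_length=0.04, step_height=0.02):
    """Open-loop CPG: one phase oscillator per leg, mapped to an (x, z) foot offset in metres."""
    targets = []
    for offset in GAIT_PHASE_OFFSETS[gait]:
        phase = 2.0 * math.pi * (frequency_hz * t + offset)
        x = 0.5 * step_length * math.cos(phase)
        # Lift the foot only during the half of the cycle where sin(phase) > 0.
        z = step_height * max(0.0, math.sin(phase))
        targets.append((x, z))
    return targets


def closed_loop_targets(t, gait, corrections, max_correction=0.01):
    """Add clipped per-leg corrections (e.g. policy outputs) to the CPG targets."""
    result = []
    for (x, z), (dx, dz) in zip(cpg_foot_targets(t, gait), corrections):
        dx = max(-max_correction, min(max_correction, dx))
        dz = max(-max_correction, min(max_correction, dz))
        result.append((x + dx, z + dz))
    return result


if __name__ == "__main__":
    # Zero corrections reproduce the open-loop gait.
    no_correction = [(0.0, 0.0)] * 4
    for leg, target in enumerate(closed_loop_targets(0.1, "trot", no_correction)):
        print(leg, target)
```

In this sketch the CPG parameters (`frequency_hz`, `step_length`, `step_height`) play the role of the quantities that would be optimized first, and the `corrections` argument stands in for the output of a learned policy. Clipping the corrections keeps the closed-loop gait close to the open-loop one, which is one plausible way to keep learning on hardware safe.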