Not if the admins block it with robots.txt

@timbray @codeyarns not so fast! If my post federates to another instance that doesn't block search with robots.txt, then it's still likely to wind up in a search engine (even if I've selected the "opt out of search engines" option). I've even found federated versions of deleted posts via search engines.

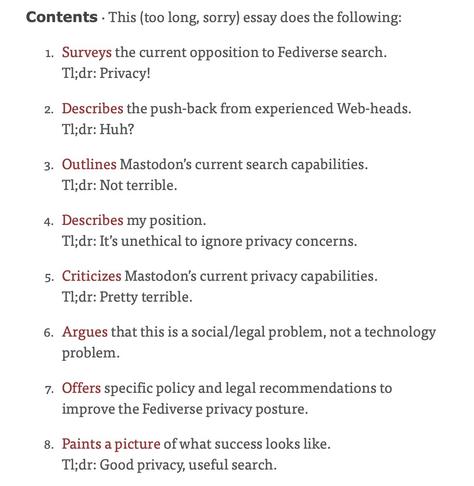
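
To make the federation point concrete, here's a rough sketch of how a well-behaved crawler would treat the same post on two instances. Everything in it is made up for illustration: the hostnames, the post path, and both robots.txt bodies are hypothetical, and a real federated copy would usually live at a different URL on the remote instance. It uses only Python's standard `urllib.robotparser`.

```python
from urllib.robotparser import RobotFileParser


def allows_crawling(robots_txt: str, url: str, user_agent: str = "*") -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt bodies: one instance blocks all crawlers,
# the other only blocks an (assumed) media proxy path.
BLOCKING = "User-agent: *\nDisallow: /\n"
PERMISSIVE = "User-agent: *\nDisallow: /media_proxy/\n"

# Hypothetical instances that each hold a copy of the same post.
instances = {"home.example": BLOCKING, "remote.example": PERMISSIVE}
post_path = "/@alice/123"  # assumed path, for illustration only

# Instances where a compliant crawler would still be allowed to index the post.
exposed = [
    host
    for host, robots_txt in instances.items()
    if allows_crawling(robots_txt, f"https://{host}{post_path}")
]
print(exposed)
```

Even in this toy setup, the home instance's robots.txt does nothing for the copy on the remote one. And robots.txt is only advisory anyway, so it says nothing about crawlers that ignore it.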

As you say, Mastodon's privacy story has problems.

The frustrating thing is, local-only toots help a lot here ... but they've been blocked from the main branch since 2017.

If your post carries clear licensing restrictions, there's then a legal club available to beat anyone who misuses it. That's my piece's central point.

@mc @timbray @codeyarns yeah. i think of it as basically a prototype at scale of a decentralized system, so there are a lot of issues related to decentralized moderation that aren't yet addressed.

It's hard to know about the privacy model in general. For search in particular, opt-in could have worked if carried through the whole code, and I think people would have been okay with it. But that's not how things played out and it seems hard to retrofit.