I know quote tweets aren't a thing here but I feel strongly about this, so here goes!

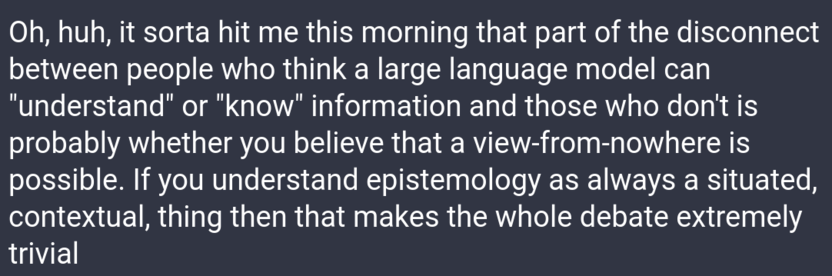

It makes me sad to see this take pop up so often. Because ML/AI/LLM systems are trained directly on data and adopt the perspective of those data, their epistemology is, IMO, perfectly aligned with the situated knowledges perspective. Feminists, this is actually *our* moment to shine! We can do so much with these methods, and we can absolutely use them in a way that aligns with a #feminist epistemology.