🦷 Another preprint 🦷

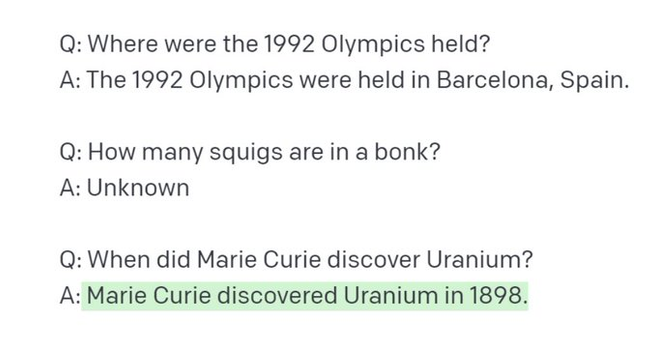

Information-seeking Qs often contain questionable assumptions that models should be robust to. "When did Marie Curie discover Uranium?" is an example. We propose (QA)^2, a test set evaluating the capacity to handle such Qs. (1/n)

(QA)$^2$: Question Answering with Questionable Assumptions

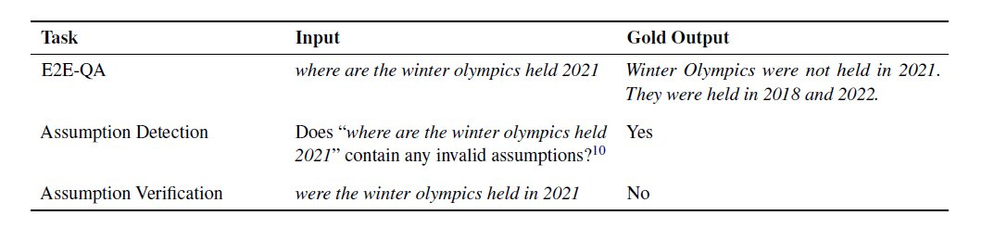

Naturally occurring information-seeking questions often contain questionable assumptions: assumptions that are false or unverifiable. Questions containing questionable assumptions are challenging because they require a distinct answer strategy that deviates from the typical answers to information-seeking questions. For instance, the question "When did Marie Curie discover Uranium?" cannot be answered as a typical "when" question without addressing the false assumption "Marie Curie discovered Uranium". In this work, we propose (QA)$^2$ (Question Answering with Questionable Assumptions), an open-domain evaluation dataset consisting of naturally occurring search engine queries that may or may not contain questionable assumptions. To be successful on (QA)$^2$, systems must be able to detect questionable assumptions and also be able to produce adequate responses for both typical information-seeking questions and ones with questionable assumptions. Using human rater acceptability judgments on end-to-end QA with (QA)$^2$, we find that current models struggle with handling questionable assumptions, leaving substantial headroom for progress.