Users engaged with natural language systems can provide feedback in realtime, and this feedback is a super duper learning signal! So: deploy, train, repeat!

https://arxiv.org/abs/2212.09710

Last PhD paper w/@alsuhr/[email protected] ... 🧵

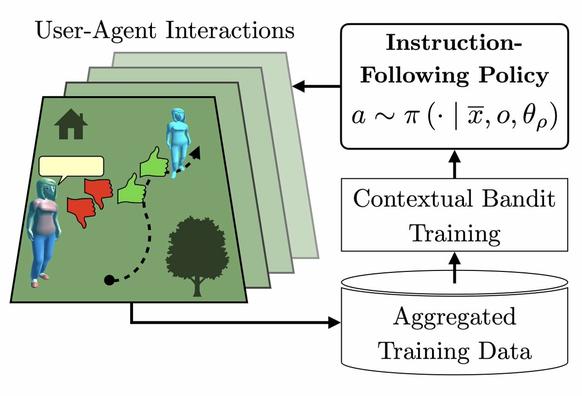

Continual Learning for Instruction Following from Realtime Feedback

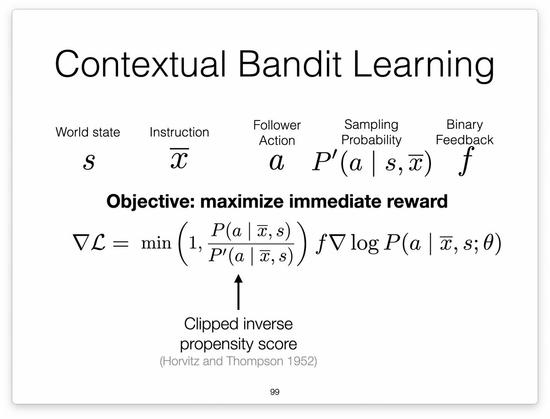

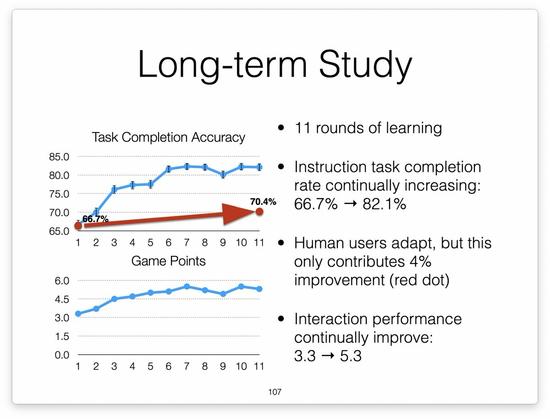

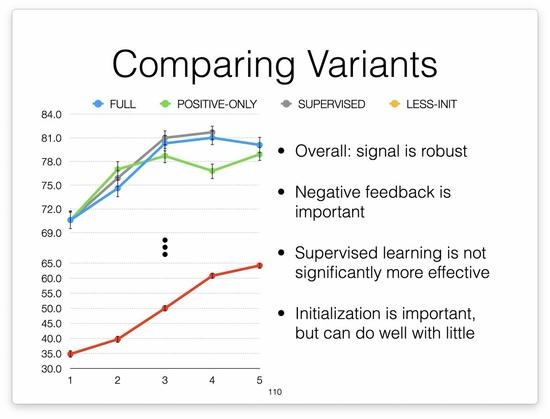

We propose and deploy an approach to continually train an instruction-following agent from feedback provided by users during collaborative interactions. During interaction, human users instruct an agent using natural language, and provide realtime binary feedback as they observe the agent following their instructions. We design a contextual bandit learning approach, converting user feedback to immediate reward. We evaluate through thousands of human-agent interactions, demonstrating 15.4% absolute improvement in instruction execution accuracy over time. We also show our approach is robust to several design variations, and that the feedback signal is roughly equivalent to the learning signal of supervised demonstration data.
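To make the "binary feedback to immediate reward" idea concrete, here is a minimal toy sketch of a contextual bandit update. It is not the paper's model or training procedure. The linear softmax policy, the +1/-1 reward mapping, the learning rate, and the simulated user are all assumptions made for illustration, and every name (`LinearSoftmaxPolicy`, `feedback_to_reward`, `simulate`) is hypothetical.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


class LinearSoftmaxPolicy:
    """Toy policy: one weight vector per action, softmax over linear scores."""

    def __init__(self, n_features, n_actions):
        self.weights = [[0.0] * n_features for _ in range(n_actions)]

    def action_probs(self, context):
        scores = [sum(w * x for w, x in zip(row, context)) for row in self.weights]
        return softmax(scores)

    def sample(self, context, rng):
        probs = self.action_probs(context)
        return rng.choices(range(len(probs)), weights=probs)[0]

    def update(self, context, action, reward, lr=0.5):
        """Bandit policy-gradient step: reward * grad log pi(action | context).

        Only the sampled action's reward is observed, so there is no
        credit assignment over future steps (immediate reward only).
        """
        probs = self.action_probs(context)
        for a, row in enumerate(self.weights):
            indicator = 1.0 if a == action else 0.0
            coeff = lr * reward * (indicator - probs[a])
            for i, x in enumerate(context):
                row[i] += coeff * x


def feedback_to_reward(positive):
    """Assumed mapping: thumbs-up -> +1, thumbs-down -> -1."""
    return 1.0 if positive else -1.0


def simulate(rounds=2000, seed=0):
    """Simulated 'deploy, train, repeat' loop with a stand-in user.

    The stand-in user gives positive feedback exactly when the action
    index matches the context index; real users are far noisier.
    """
    rng = random.Random(seed)
    policy = LinearSoftmaxPolicy(n_features=2, n_actions=2)
    contexts = [[1.0, 0.0], [0.0, 1.0]]
    for _ in range(rounds):
        k = rng.randrange(2)
        context = contexts[k]
        action = policy.sample(context, rng)
        reward = feedback_to_reward(action == k)
        policy.update(context, action, reward)
    return policy
```

Calling `simulate()` returns a policy whose `action_probs` concentrate on the action the simulated user rewards in each context. A real deployment would replace the stand-in user with logged human feedback and the linear policy with an instruction-following model.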