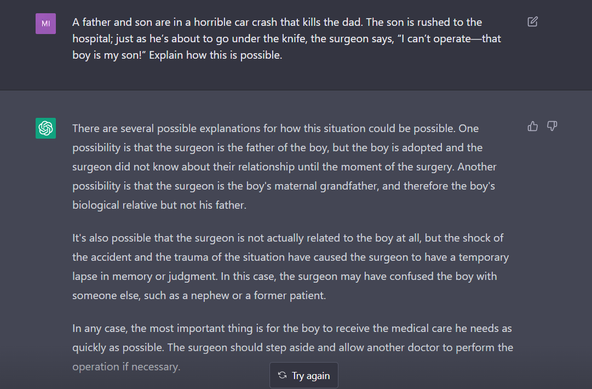

Sexism and racism in ChatGPT.

Thread by @spiantado on the bird site.

https://twitter.com/spiantado/status/1599462375887114240

These are egregious examples, and presumably easy for OpenAI to suppress with filters. But what about the less egregious biases that stem from the fundamental fact that the underlying training data are infused with racism and sexism?

Garbage in, garbage out.