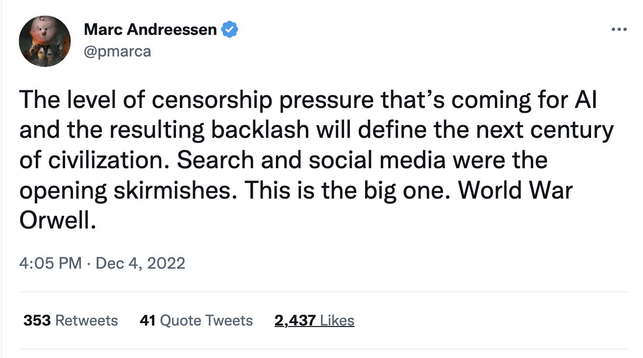

What's going on here is that unrelated libertarian principles are being recoded as issues of free speech. All of a sudden, preventing algorithmic harm becomes leftist censorship, and the culture war is used as a bulwark against government regulation of discriminatory technologies.

"Algorithmic decisions about parole, loan approvals, interest rates, program admissions, insurance premiums, security clearance, etc. that depend on race and ethnicity? That's not discrimination, it's *speech*."