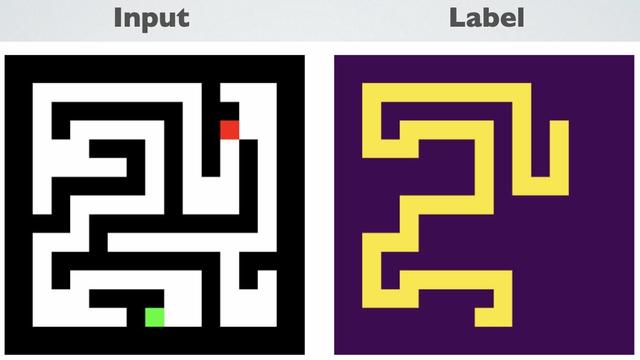

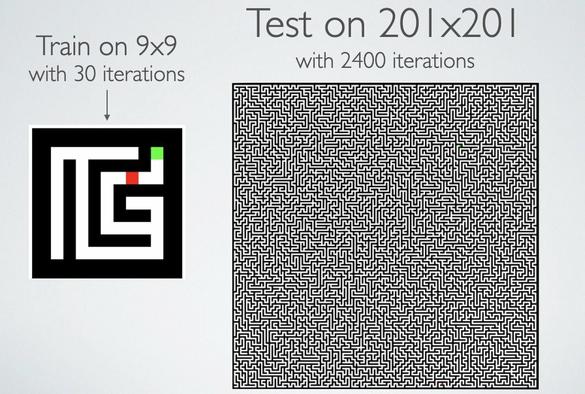

If we test the same network on a larger maze it totally fails. The network memorized *what* maze solutions look like, but it didn’t learn *how* to solve mazes.

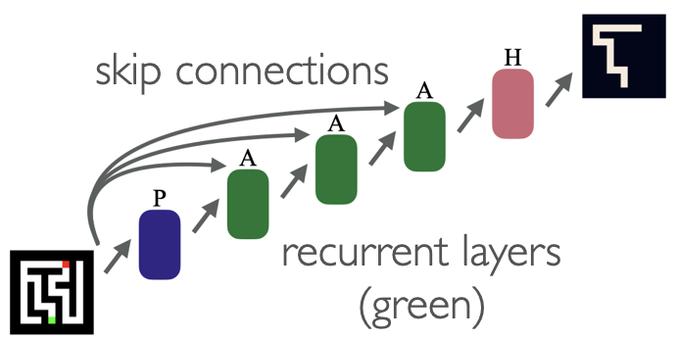

We can make the model synthesize a scalable maze-solving algorithm just by changing its architecture...

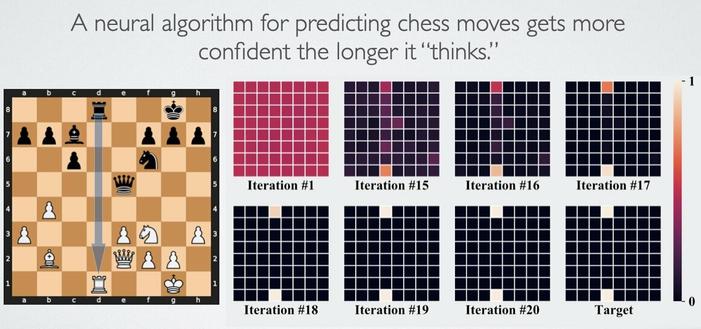

Networks can also learn algorithms for chess and prefix sum computation. For all these problems we use the same network and training loop, but different training data.
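Since only the training data changes between tasks, here is one way training pairs for a prefix-sum task might be generated. This is a minimal sketch that assumes the binary variant, where each output bit is the running sum of the input bits modulo 2. The function names `prefix_parity` and `make_prefix_sum_example`, and the lengths in the usage note, are hypothetical and not from the thread.

```python
import random


def prefix_parity(bits):
    """Target for the (assumed) binary prefix-sum task: cumulative sum mod 2."""
    out = []
    running = 0
    for b in bits:
        running = (running + b) % 2  # running parity of bits seen so far
        out.append(running)
    return out


def make_prefix_sum_example(length, rng):
    """Sample a random bit string of the given length and its prefix-sum target."""
    bits = [rng.randint(0, 1) for _ in range(length)]
    return bits, prefix_parity(bits)


rng = random.Random(0)
train_x, train_y = make_prefix_sum_example(32, rng)   # short strings for training
test_x, test_y = make_prefix_sum_example(512, rng)    # much longer strings at test time
```

For example, `prefix_parity([1, 0, 1, 1, 0])` gives `[1, 1, 0, 1, 1]`. Extrapolation means the network is trained only on short strings but is expected to produce correct targets for much longer ones, given enough iterations.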

See you all on Thursday afternoon at @NeuripsConf!

End-to-end Algorithm Synthesis with Recurrent Networks: Logical Extrapolation Without Overthinking

Machine learning systems perform well on pattern matching tasks, but their ability to perform algorithmic or logical reasoning is not well understood. One important reasoning capability is algorithmic extrapolation, in which models trained only on small/simple reasoning problems can synthesize complex strategies for large/complex problems at test time. Algorithmic extrapolation can be achieved through recurrent systems, which can be iterated many times to solve difficult reasoning problems. We observe that this approach fails to scale to highly complex problems because behavior degenerates when many iterations are applied, an issue we refer to as "overthinking." We propose a recall architecture that keeps an explicit copy of the problem instance in memory so that it cannot be forgotten. We also employ a progressive training routine that prevents the model from learning behaviors that are specific to iteration number and instead pushes it to learn behaviors that can be repeated indefinitely. These innovations prevent the overthinking problem, and enable recurrent systems to solve extremely hard extrapolation tasks.
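The two ideas in the abstract can be illustrated with a toy model. The following is a minimal NumPy sketch, not the authors' implementation. It makes several assumptions: a single weight-tied `tanh` layer stands in for the real trained network, "recall" is modeled as adding `W_x @ x` at every step (equivalent to a linear layer on the concatenation `[h; x]`), and progressive training is reduced to its iteration schedule. In this schedule, `n` iterations are run as a warm-up that would receive no gradients, and `k` further iterations are the only ones that would. `RecallNet`, `progressive_iters`, and all weight names are hypothetical.

```python
import random

import numpy as np


class RecallNet:
    """Toy weight-tied recurrent block with an optional recall path.

    With recall=True: h_{t+1} = tanh(W_h @ h_t + W_x @ x), i.e. a linear
    layer applied to the concatenation [h_t; x], so x is never forgotten.
    """

    def __init__(self, d_in, d_hidden, d_out, seed=0, recall=True):
        rng = np.random.default_rng(seed)
        self.W_in = rng.normal(scale=0.5, size=(d_hidden, d_in))
        self.W_h = rng.normal(scale=0.5, size=(d_hidden, d_hidden))
        self.W_x = rng.normal(scale=0.5, size=(d_hidden, d_in))
        self.W_out = rng.normal(scale=0.5, size=(d_out, d_hidden))
        self.recall = recall

    def init_state(self, x):
        return np.tanh(self.W_in @ x)  # project the input into the hidden state

    def step(self, h, x):
        pre = self.W_h @ h
        if self.recall:
            pre = pre + self.W_x @ x  # re-inject the problem instance every iteration
        return np.tanh(pre)

    def run(self, x, iters, h=None):
        h = self.init_state(x) if h is None else h
        for _ in range(iters):
            h = self.step(h, x)  # the same weights are reused at every iteration
        return h

    def readout(self, h):
        return self.W_out @ h


def progressive_iters(max_iters, rng):
    """Sample n in [0, max_iters - 1] and k in [1, max_iters - n]."""
    n = rng.randrange(max_iters)
    k = rng.randint(1, max_iters - n)
    return n, k


rng = random.Random(0)
net = RecallNet(d_in=4, d_hidden=8, d_out=2)
x = np.ones(4)
n, k = progressive_iters(max_iters=10, rng=rng)
# In an autograd framework, these n warm-up iterations would run without gradient tracking...
h_warm = net.run(x, n)
# ...and only these k iterations would contribute gradients to the loss.
h_final = net.run(x, k, h=h_warm)
y = net.readout(h_final)
```

Because the loss would only see the last `k` steps, started from a state produced by an arbitrary number of earlier steps, the block cannot rely on knowing which iteration it is on. That is the behavior the progressive routine is meant to discourage.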