New paper 🚨 https://arxiv.org/abs/2211.09260

Can we train a single search system that satisfies our diverse information needs?

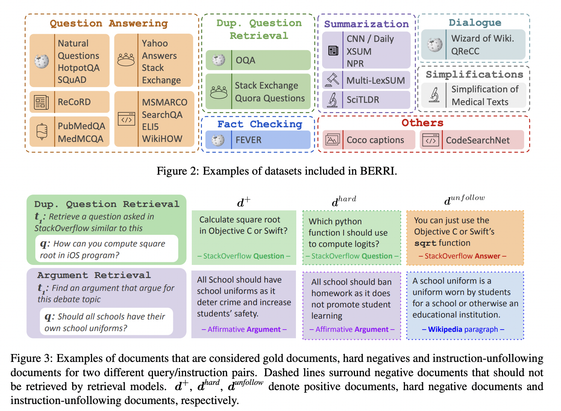

We present 𝕋𝔸ℝ𝕋 🥧 the first multi-task instruction-following retriever trained on 𝔹𝔼ℝℝ𝕀 🫐, a collection of 40 retrieval tasks with instructions! 1/N

Task-aware Retrieval with Instructions

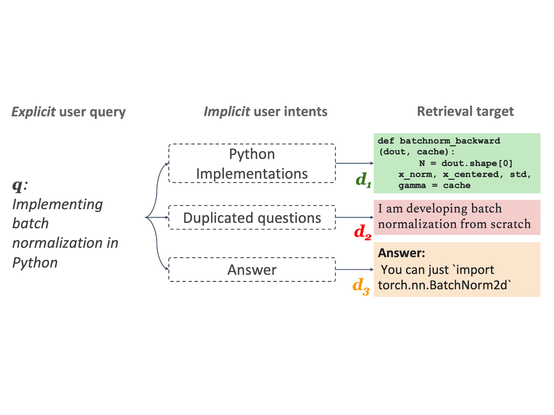

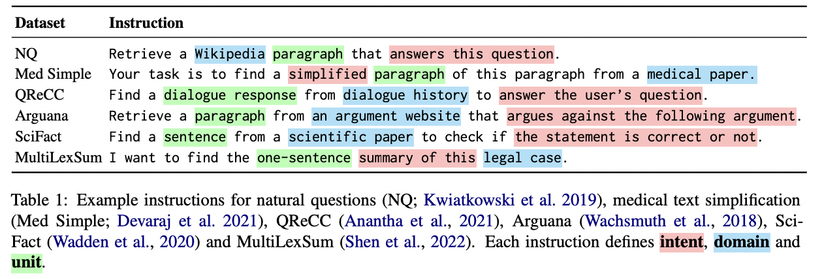

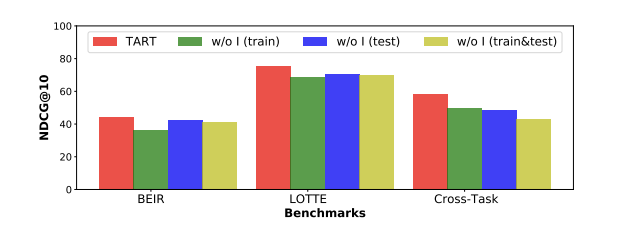

We study the problem of retrieval with instructions, where users of a retrieval system explicitly describe their intent along with their queries, making the system task-aware. We aim to develop a general-purpose task-aware retrieval system using multi-task instruction tuning that can follow human-written instructions to find the best documents for a given query. To this end, we introduce the first large-scale collection of approximately 40 retrieval datasets with instructions, and present TART, a multi-task retrieval system trained on the diverse retrieval tasks with instructions. TART shows strong capabilities to adapt to a new task via instructions and advances the state of the art on two zero-shot retrieval benchmarks, BEIR and LOTTE, outperforming models up to three times larger. We further introduce a new evaluation setup to better reflect real-world scenarios, pooling diverse documents and tasks. In this setup, TART significantly outperforms competitive baselines, further demonstrating the effectiveness of guiding retrieval with instructions.
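To make "retrieval with instructions" concrete, here is a toy sketch of the interface the abstract describes: the user's instruction is combined with the query before scoring against a pooled set of documents. This is not the TART model. The bag-of-words `embed` function stands in for a learned encoder, and the `[SEP]` separator, the helper names, and the two example documents are all assumptions made for illustration.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for a learned encoder: lowercase bag-of-words counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_input(instruction, query):
    """Join instruction and query; the "[SEP]" separator is an assumption."""
    return f"{instruction} [SEP] {query}"


def retrieve(instruction, query, docs):
    """Return the document most similar to the instruction-augmented query."""
    q = embed(build_input(instruction, query))
    return max(docs, key=lambda d: cosine(q, embed(d)))


# Hypothetical pooled corpus mixing two kinds of documents.
docs = [
    "python code snippet: def quicksort(arr): return sorted(arr)",
    "wikipedia paragraph: quicksort is a divide and conquer sorting algorithm",
]

query = "quicksort"
code_hit = retrieve("retrieve a python code snippet", query, docs)
wiki_hit = retrieve("retrieve a wikipedia paragraph", query, docs)

print(code_hit)
print(wiki_hit)
```

With the same query, changing only the instruction changes which document ranks first. That is the behavior the pooled evaluation setup is meant to probe, although a real system would rely on a trained encoder, not word overlap.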