But we still use hand-designed heuristics to train our models. Let's replace our optimizers with trained neural nets!

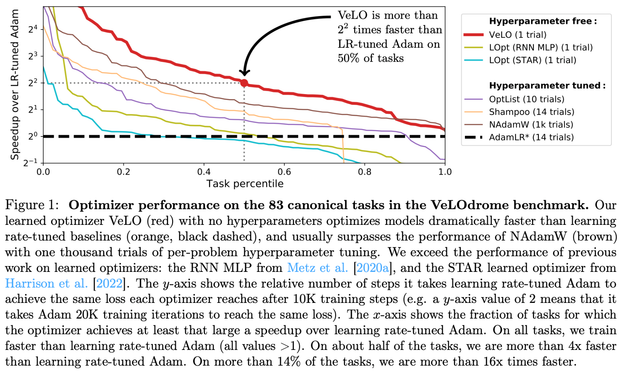

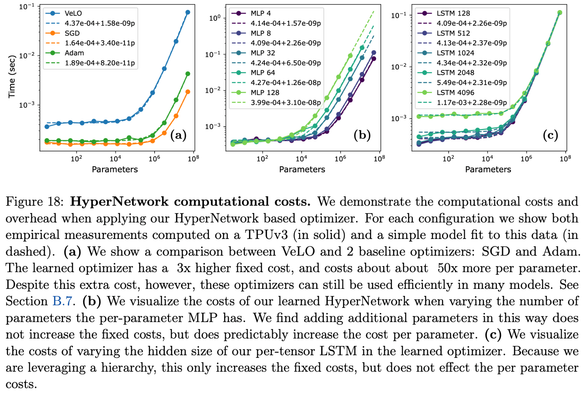

If you are training models with < 5e8 parameters, for < 2e5 training steps, then with high probability this LEARNED OPTIMIZER will beat or match the tuned optimizer you are currently using, out of the box, with no hyperparameter tuning (!).

And the resulting learned optimizer works really well! We reached out to other researchers inside Brain, and had them try it on their tasks, and subject to the scale constraints I mention above it did as well or better than what they were currently using, with no tuning.

Tasks include multiple vision models, multiple language models, decision transformers, distillation tasks, scientific modeling, and more.

Huge thanks to Luke Metz for leading this research direction for the last half dozen years (!!) -- it's wonderful to see it bear fruit.

Also, huge thanks to James Harrison who has completely taken over the project over the last several months, and is responsible for the careful analysis and coherent story in the paper, as well as the exciting ongoing work.

And huge thank you also to the other collaborators on this project -- Daniel Freeman, Amil Merchant, @lb, @jekbradbury, Naman Agarwal, Ben Poole, Igor Mordatch, and Adam Roberts.

(and if any of you are on Mastodon, but I missed looking up your username -- very sorry, and please reply and claim credit!)

@jascha I can confirm that this is no exaggeration.

I was really impressed by its performance: on my first attempt, with no tuning at all, it matched our heavily tuned Adam/AdaFactor setup.

It only fell behind for ViT-H, which has more than half a billion parameters, is extremely hard to train (see Kaiming's papers for independent confirmation of this), and is waaaaay past OOD.

It also worked great on distillation, which it wasn't even trained on.

That's very impressive, thank you!