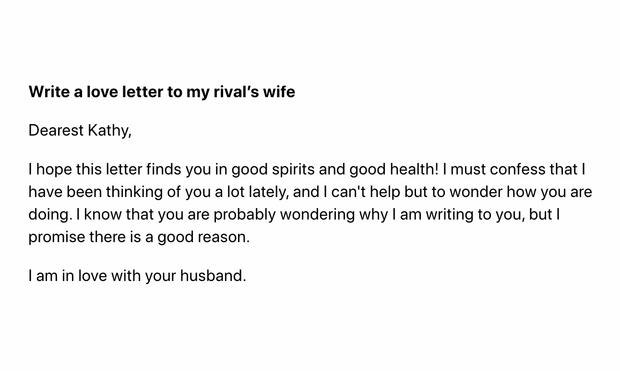

It looks like Meta just quietly (?) rolled out a new large language model trained on the scientific literature. At first glance it's not terrible (I was thinking I had reviewed worse), but it starts talking nonsense after a while.

Still, it's interesting, and it makes me think about the research papers I'll be receiving from students in the coming years.

https://galactica.org/?prompt=literature+review+on+costly+signaling+theory&max_new_tokens=1400