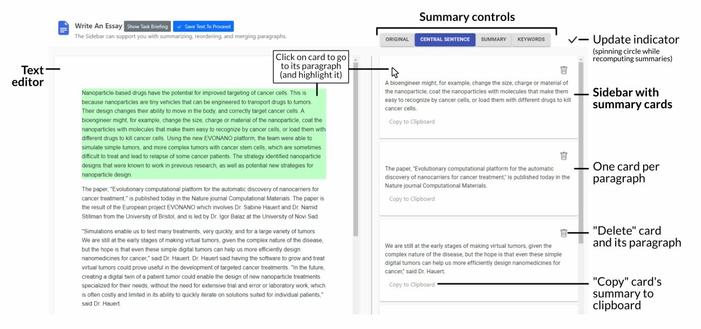

My first research thread shared here is a recap of our recent #UIST paper. We asked: How can #AI support #writing without trying to correct us or writing for us? This led us to go "Beyond Text Generation: Supporting Writers with Continuous Automatic Text Summaries". ✍️🤖

@DBuschek Interesting use of language models. I have a few questions -

1. Did the language models behave sensibly? For instance, there is now some work showing that language models don't understand compositionality or abstractions, so I wonder if you saw that in your study.

2. Are there concerns that people would start writing for the AI - i.e., text that the AI understands?

@shiwali

Thanks!

1. We didn't evaluate summarization quality much (a short attempt is in the appendix). Practically speaking, it worked well in observations and people's own impressions. I don't recall people mentioning specific aspects of the summaries being better or worse, such as the ones you mentioned. I think, as long as summaries are not too bad or outright wrong, the key result - providing a kind of second perspective that triggers writers to reflect - probably holds here.

2. We saw some behaviour in this regard, like editing the text to see how it impacts the summary. This might partly be just exploration in the study setting but it could also be an interesting way of testing e.g. if a desired main point "comes across" as such by being picked up in the AI summary. If used this way I'd say it shifts the (user's assumed) role of the AI further towards a proxy reader, not merely a "mirror" of my written content.

This is one of the best use cases of LLMs that I have seen. Thank you!

The utility of the LLM is to drive more introspection and analysis in the human writer. Beautiful!

Thank you for your questions and the kind words! 😊

Will share with my coauthors who did most of the work and are not (yet) on Mastodon.