Going to find out how deep learning works for Information Retrieval with Dacheng Tao. #SIGIR2020

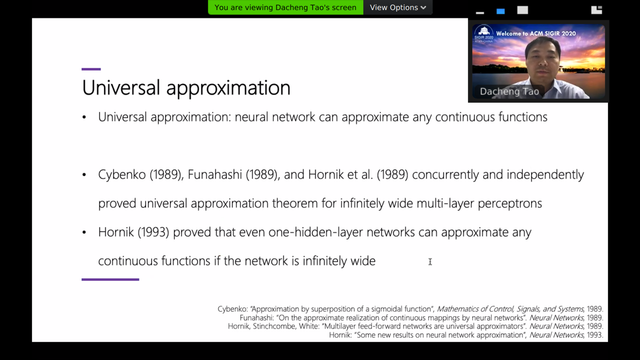

Why do we need deep learning when shallow neural nets can already approximate any function?

@djoerd Does the speaker give an answer to this question or is his message that neural networks are enough for everything?

@scolobb The speaker discussed why deep neural networks, although overparameterized (the number of parameters often exceeds the number of training examples), still give excellent results.

@scolobb Conclusion: they do not really know, but let's find out!

@scolobb One would expect over-training at some point: excellent performance on the training data, but bad performance on the test data.

@scolobb My guess would be that deep neural networks use *alternative* parameters on every layer that do not compete to find the best fit. Does BERT really need 12 layers? Theory suggests it does not...

@djoerd I don't really understand the idea of alternative parameters that don't compete. It would mean redundancy, right? That would fit with the idea that some nets don't seem to need all of their layers.