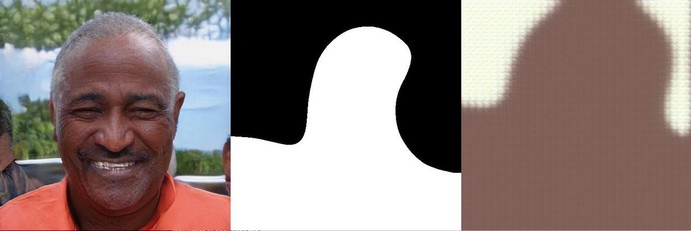

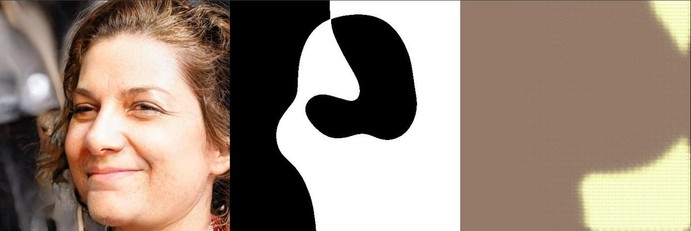

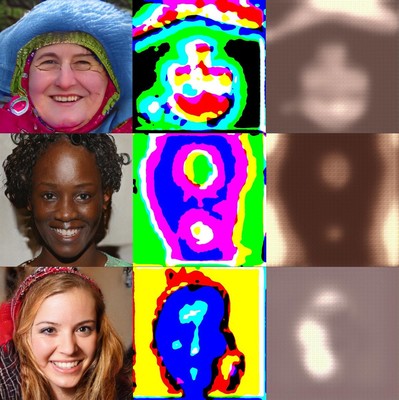

It might look like a very bad GAN, but I am quite astonished that it worked at all:

These are two GANs that try to invent their own "language". Both of them learn only from each other. The center image is part of how model A encodes the input, and on the right is model B's output.
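To make the "learn only from each other" loop concrete, here is a rough toy sketch. It is not the setup behind the images above: it swaps the GANs for two tiny linear models trained on a plain reconstruction loss, with no adversarial part. The function name `train_pair`, the 4-number inputs, and the 2-number "message" are all made up for illustration.

```python
import random

def train_pair(steps=3000, lr=0.02, seed=0):
    """Toy stand-in: model A encodes, model B decodes, both trained jointly.

    Assumption: plain linear maps and squared reconstruction error,
    NOT the GAN objective used for the images in the post.
    """
    rng = random.Random(seed)
    dim, code = 4, 2
    # Model A: encoder weights (code x dim). Model B: decoder weights (dim x code).
    A = [[rng.uniform(-0.5, 0.5) for _ in range(dim)] for _ in range(code)]
    B = [[rng.uniform(-0.5, 0.5) for _ in range(code)] for _ in range(dim)]
    losses = []
    for _ in range(steps):
        # Hypothetical input: 4 numbers that really only carry 2 numbers of information.
        a, b = rng.uniform(-1, 1), rng.uniform(-1, 1)
        x = [a, b, a + b, a - b]
        # A turns the input into a short "message" z (the made-up language).
        z = [sum(A[k][i] * x[i] for i in range(dim)) for k in range(code)]
        # B only ever sees z and tries to rebuild the input from it.
        y = [sum(B[i][k] * z[k] for k in range(code)) for i in range(dim)]
        err = [y[i] - x[i] for i in range(dim)]
        losses.append(sum(e * e for e in err) / dim)
        # Gradient of 0.5 * sum(err^2) w.r.t. the message, computed before B changes.
        grad_z = [sum(err[i] * B[i][k] for i in range(dim)) for k in range(code)]
        # One SGD step for B, then for A: each model's update depends on the other.
        for i in range(dim):
            for k in range(code):
                B[i][k] -= lr * err[i] * z[k]
        for k in range(code):
            for i in range(dim):
                A[k][i] -= lr * grad_z[k] * x[i]
    return A, B, losses

if __name__ == "__main__":
    _, _, losses = train_pair()
    print("mean loss, first 200 steps:", sum(losses[:200]) / 200)
    print("mean loss, last 200 steps: ", sum(losses[-200:]) / 200)
```

Nobody tells A what the message `z` should look like. Whatever encoding comes out is just the one B happens to be able to read, which is the same reason the center image in the post does not have to look meaningful to a human.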