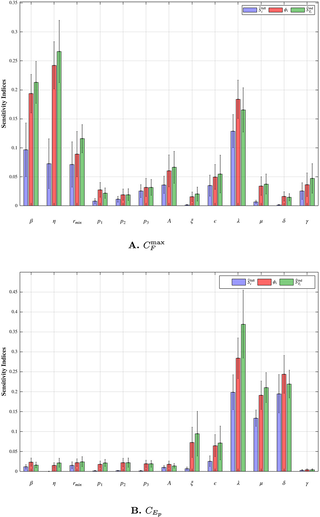

Bayesian-calibrated global sensitivity analysis for mathematical models using generative AI

Author summary: In this research, we introduce a novel approach for conducting global sensitivity analysis of biological models using generative AI. Our method is fully compatible with Bayesian inference, which is widely used to calibrate the parameters of biological systems. Traditional sensitivity analyses assume independent parameters or impose simplified dependence structures. Our approach instead performs sensitivity analysis directly on Bayesian-calibrated posterior distributions, in which parameter correlations are learned from observational data. The resulting sensitivity analysis therefore reflects realistic, data-relevant parameter sensitivities rather than purely structural sensitivities of an abstract model. The proposed framework is flexible, scalable, and broadly applicable to deterministic models calibrated through Bayesian methods. Furthermore, its generative nature paves the way for extensions to distributional sensitivity analysis in stochastic or agent-based models, broadening its potential for modern biological applications.
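The summary does not say which estimator is used. As a rough illustration of what "sensitivity analysis on correlated posterior samples" means, here is a minimal sketch using a plain binning ("given-data") estimator of first-order indices, S_i = Var(E[y | theta_i]) / Var(y). This is a standard swapped-in technique, not the generative-AI method the paper describes. A few assumptions are hypothetical stand-ins:

- The correlated Gaussian replaces real posterior draws.
- The linear model replaces a calibrated biological model.
- The function name `first_order_indices` is for illustration only.

```python
import numpy as np


def first_order_indices(theta, y, n_bins=20):
    """Given-data estimate of S_i = Var(E[y | theta_i]) / Var(y).

    theta: (n_samples, n_params) posterior draws; columns may be correlated.
    y: (n_samples,) model outputs evaluated at those draws.
    """
    n, d = theta.shape
    y_mean, var_y = y.mean(), y.var()
    indices = np.empty(d)
    for i in range(d):
        # Quantile edges so each bin holds roughly n / n_bins samples.
        edges = np.quantile(theta[:, i], np.linspace(0.0, 1.0, n_bins + 1))
        # Map each sample to a bin index in [0, n_bins - 1].
        bins = np.clip(np.searchsorted(edges, theta[:, i], side="right") - 1,
                       0, n_bins - 1)
        counts = np.bincount(bins, minlength=n_bins)
        sums = np.bincount(bins, weights=y, minlength=n_bins)
        mask = counts > 0
        # Per-bin mean of y approximates E[y | theta_i].
        cond_means = sums[mask] / counts[mask]
        weights = counts[mask] / n
        # Variance of the conditional means, relative to the total variance.
        indices[i] = np.sum(weights * (cond_means - y_mean) ** 2) / var_y
    return indices


# Hypothetical stand-in "posterior": theta_0 and theta_1 correlated (rho = 0.8).
rng = np.random.default_rng(0)
cov = np.array([[1.0, 0.8, 0.0],
                [0.8, 1.0, 0.0],
                [0.0, 0.0, 1.0]])
theta = rng.multivariate_normal(np.zeros(3), cov, size=20_000)

# Hypothetical model that ignores theta_1 entirely.
y = theta[:, 0] + 0.5 * theta[:, 2]

S = first_order_indices(theta, y)
print(np.round(S, 2))
```

The model never uses theta_1, yet theta_1 still receives a sizeable first-order index. Under the fitted correlation, knowing theta_1 tells you about theta_0, so it carries information about y. An analysis that assumed independent parameters would miss this effect, which is the kind of dependence the summary emphasizes.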

Robust Reinforcement Learning ...

ieeexplore.ieee.org/document/11428...

On consciousness in animals an...

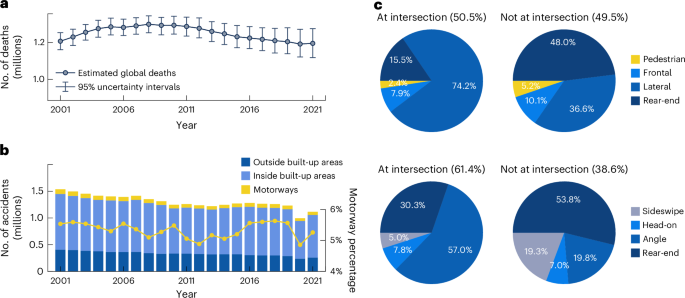

Learning collision risk proactively from naturalistic driving data at scale - Nature Machine Intelligence

Jiao et al. introduce a generalized safety measure for autonomous driving systems that learns collision risk from everyday driving data without labels. It accurately warns of crashes and near-crashes in real time, securing time for an early reaction.

Neural Tuning for Ordinal Processing: Convergent Patterns in Human Brains and Artificial Networks

Processing ordinality, i.e., the rank of an item in a series such as 1st, 2nd, 3rd, etc., is a fundamental skill shared by humans and animals. Humans often use symbolic sequences like numbers or letters, but ordinality does not depend on language or symbols. Across species, ordinality plays a critical role in behaviors such as decision-making, foraging, and social organization. We hypothesize that ordinality perception is supported by neuronal tuning, i.e., neurons that respond selectively to specific ranks. Using ultrahigh-field 7 T fMRI and population receptive field (pRF) modeling in human participants (both female and male), we identified neural populations in parietal and premotor cortices that are tuned to nonsymbolic ordinal positions. As in other sensory domains, tuning width increased with preferred ordinal rank, suggesting reduced precision and potentially lower perceptual accuracy for higher ranks. Additionally, pRF measurements revealed that the cortical territory devoted to higher ordinalities decreased with rank. This reinforces that neural precision is greatest for early positions (e.g., 1st and 2nd) and declines with rank. These responses did not generalize to symbolic ordinality. Similar tuning to nonsymbolic ordinality emerged spontaneously in hierarchical convolutional neural networks trained on visual tasks. Together, these results suggest that the tuning properties of these neuronal populations support nonsymbolic ordinality perception and may reflect an inherent feature of neural processing.

5 Minute Papers on AI for the Planet

Learn how AI can be used to protect biodiversity, fight climate change, and simply better understand our planet through 5-minute explainers covering academic papers on AI for the Planet. AI is not just chatbots! Grace Lindsay is a professor of Data Science & Psychology. She teaches a course on Machine Learning for Climate Change. ai.for.the.planet on socials

Ten simple rules for building ...

RE: https://bsky.app/profile/did:plc:op7eevqcejmhguigdma42vdp/post/3mcxxhobvik2d