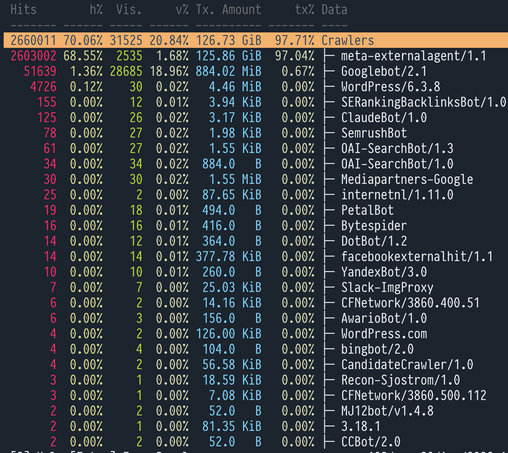

Noticed an uptick in #ai crawlers that ignore robots.txt and have started to hog my server noticeably. As a quick stop-gap, I’m blocking their user agents in #haproxy using https://github.com/ai-robots-txt/ai.robots.txt/ while I figure out how to bolt on #haphash (https://github.com/dgl/haphash).
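Here is a rough sketch of the user-agent blocking idea. It assumes you start from a robots.txt in the ai.robots.txt style, where several `User-agent:` lines share one `Disallow: /`, and that you want a plain pattern file with one agent per line for HAProxy to load. The helper names and the sample agents are illustrative only. On the HAProxy side, a rule along the lines of `http-request deny if { req.hdr(user-agent) -m sub -i -f /etc/haproxy/ai-bots.txt }` (hypothetical path) would do a case-insensitive substring match against that file. `is_blocked` below mirrors that matching so you can sanity-check the list before reloading.

```python
"""Hypothetical helper: turn the User-agent entries of an ai.robots.txt-style
robots.txt into a pattern file (one user agent per line) for an HAProxy ACL."""


def extract_user_agents(robots_txt: str) -> list[str]:
    """Collect distinct User-agent values, skipping the catch-all '*'."""
    agents: list[str] = []
    seen: set[str] = set()
    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        key, sep, value = line.partition(":")
        if not sep or key.strip().lower() != "user-agent":
            continue  # ignore Disallow, Sitemap, and other directives
        agent = value.strip()
        if agent and agent != "*" and agent.lower() not in seen:
            seen.add(agent.lower())  # de-duplicate case-insensitively
            agents.append(agent)
    return agents


def to_haproxy_patterns(agents: list[str]) -> str:
    """Render one pattern per line, the format a `-f` pattern file uses."""
    return "\n".join(agents) + "\n" if agents else ""


def is_blocked(user_agent: str, agents: list[str]) -> bool:
    """Case-insensitive substring match, mirroring `-m sub -i`."""
    ua = user_agent.lower()
    return any(a.lower() in ua for a in agents)


if __name__ == "__main__":
    # Illustrative sample, not the real upstream list.
    sample = """\
User-agent: GPTBot
User-agent: ClaudeBot
User-agent: CCBot
Disallow: /
"""
    print(to_haproxy_patterns(extract_user_agents(sample)), end="")
```

Substring matching is deliberately loose: crawler user agents usually embed the bot name inside a longer Mozilla-style string, so an exact match would miss most of them. It is still only a stop-gap, since anything that ignores robots.txt can just as easily change its user agent.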