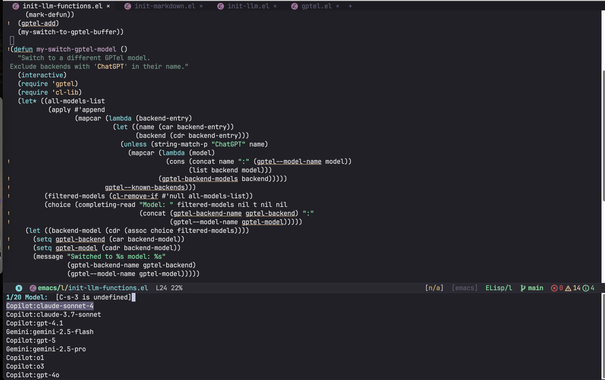

Having a local LLM gives me the peace of mind to do things like this. Here I'm using gptel-agent with Ollama running qwen3.5:27b.

I was curious whether it could run a simple speed test from within Emacs. I then asked for the results in the same units browsers show when downloading files, and it checks out. The model can call tools through gptel-agent, which usually means running Emacs Lisp, but it can also run bash. I wouldn't trust it to build anything complex, but for simple web searching and fun things like this, it's surprisingly good.

Speed Test Results: 58 Mbps (7.2 MiB/s)

Download times:

• Web page (5MB): 0.7s

• Album (50MB): 7s

• HD movie (1GB): 2.3 min

• OS image (4GB): 9 min

• Large game (50GB): 1.4 hours
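
The arithmetic behind a summary like this is easy to sanity-check. Below is a rough sketch in Python, assuming decimal units (1 MB = 1,000,000 bytes, 1 GB = 1,000 MB) and the file sizes from the list above. The function names are made up for illustration, and this is not the code the model actually ran.

```python
# Sketch: convert a link speed in megabits per second into download times.
# Assumption: decimal units throughout, except where MiB is named explicitly.

def mbps_to_mb_per_s(mbps: float) -> float:
    """Megabits per second -> megabytes per second (8 bits per byte)."""
    return mbps / 8


def mbps_to_mib_per_s(mbps: float) -> float:
    """Megabits per second -> mebibytes per second (1 MiB = 1,048,576 bytes)."""
    return mbps * 1_000_000 / 8 / (1024 * 1024)


def download_seconds(size_mb: float, mbps: float) -> float:
    """Seconds needed to download size_mb megabytes at the given link speed."""
    return size_mb / mbps_to_mb_per_s(mbps)


def humanize(seconds: float) -> str:
    """Format a duration using the largest sensible unit."""
    if seconds < 60:
        return f"{seconds:.1f}s"
    if seconds < 3600:
        return f"{seconds / 60:.1f} min"
    return f"{seconds / 3600:.1f} hours"


# File sizes in MB, taken from the list above.
SIZES_MB = {
    "Web page (5MB)": 5,
    "Album (50MB)": 50,
    "HD movie (1GB)": 1_000,
    "OS image (4GB)": 4_000,
    "Large game (50GB)": 50_000,
}

if __name__ == "__main__":
    speed = 58  # Mbps, the measured figure quoted above
    print(f"{speed} Mbps = {mbps_to_mb_per_s(speed):.2f} MB/s "
          f"= {mbps_to_mib_per_s(speed):.2f} MiB/s")
    for label, size in SIZES_MB.items():
        print(f"{label}: {humanize(download_seconds(size, speed))}")
```

Under these assumptions, 58 Mbps is 7.25 MB/s (roughly 6.9 MiB/s), so the "7.2" figure lines up with decimal megabytes. The first four download times match to within rounding. The 50GB case comes out nearer 1.9 hours, so that last line may be worth a second look.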