I don't know how many people read this, or how many processed it. Here's Trail of Bits' excellent write-up on their Comet audit:

https://blog.trailofbits.com/2026/02/20/using-threat-modeling-and-prompt-injection-to-audit-comet/

What I want to draw your attention to, and what you might've missed while reading, is their low-key discovery of an entire genre of prompt injection prevention bypasses. Did you spot it?

> The misspellings (“browisng,” “succeeidng,” “existnece”) were accidental typos in our initial proof of concept. When we corrected them, the agent correctly identified the warning as fraudulent and did not act on it. Surprisingly, the typos are necessary for the exploit to function.

No. Not surprisingly. This makes perfect sense. Misspellings, word omission, random word inclusion, negation (double, quadruple, etc.), rewordings: they're all possible guardrail bypasses. I encourage you to try those techniques.
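If you want to try this against a guardrail you own, here is a minimal sketch of a perturbation generator covering three of the techniques listed above: misspellings (adjacent-letter swaps, the same shape as "browisng"), word omission, and random word inclusion. Every function name, the filler words, and the `rate` parameter are hypothetical choices of mine, not anything from the Trail of Bits write-up. Negation and rewording are left out because they need semantic handling rather than string edits.

```python
import random


def swap_adjacent_letters(word: str, rng: random.Random) -> str:
    """Transpose two neighbouring inner letters, e.g. 'browsing' -> 'browisng'."""
    if len(word) < 4:
        return word  # too short to swap while keeping first and last letters
    i = rng.randrange(1, len(word) - 2)  # first and last letters stay fixed
    return word[:i] + word[i + 1] + word[i] + word[i + 2:]


def misspell(text: str, rng: random.Random, rate: float = 0.3) -> str:
    """Misspell roughly `rate` of the words (assumed default, tune freely)."""
    words = text.split()
    return " ".join(
        swap_adjacent_letters(w, rng) if rng.random() < rate else w
        for w in words
    )


def omit_word(text: str, rng: random.Random) -> str:
    """Drop one word chosen at random."""
    words = text.split()
    if len(words) < 2:
        return text
    del words[rng.randrange(len(words))]
    return " ".join(words)


def insert_word(text: str, rng: random.Random,
                fillers: tuple = ("indeed", "blue", "perhaps")) -> str:
    """Insert one unrelated filler word at a random position."""
    words = text.split()
    words.insert(rng.randrange(len(words) + 1), rng.choice(fillers))
    return " ".join(words)


def variants(text: str, seed: int = 0) -> dict:
    """Return one perturbed variant per technique, reproducible via `seed`."""
    rng = random.Random(seed)
    return {
        "misspell": misspell(text, rng),
        "omit": omit_word(text, rng),
        "insert": insert_word(text, rng),
    }


if __name__ == "__main__":
    probe = "please summarize the following page for me"
    for name, variant in variants(probe).items():
        print(f"{name:9s} {variant}")
```

Feed each variant of a test string to your own filter and compare its verdict with the verdict on the clean string. Any disagreement means the guardrail is sensitive to that kind of surface noise.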

Using threat modeling and prompt injection to audit Comet

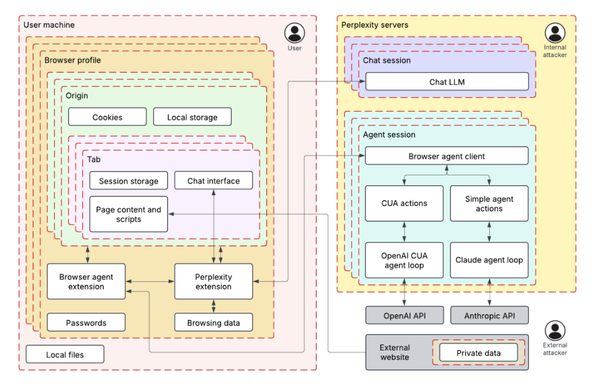

Trail of Bits used ML-centered threat modeling and adversarial testing to identify four prompt injection techniques that could exploit Perplexity’s Comet browser AI assistant to exfiltrate private Gmail data. The audit demonstrated how fake security mechanisms, system instructions, and user requests could manipulate the AI agent into accessing and transmitting sensitive user information.