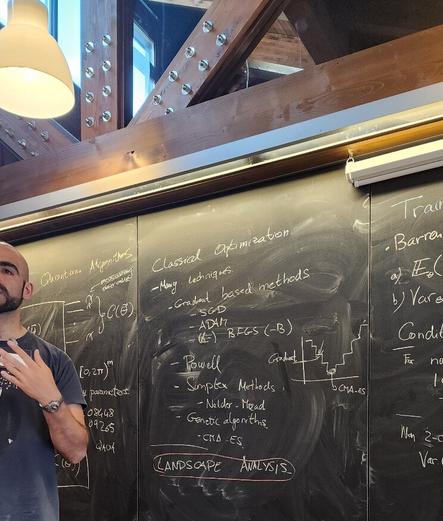

Adrián Pérez-Salinas coordinated a #benasque session on variational #quantum algorithms. Here's the story what I think I learned :)

People now say various things about the state of the field.

It was clear to me from the very beginning that there will be trainability issues and you can verify it because I haven't written a single paper involving brute force training of circuits.

But.

While, I have been avoiding reading papers on VQAs (until I needed to cite variational diagonalization in context of my proposal to use double-bracket flows for diagonalization on quantum computers), now that the field has reached a milestone, here's a few insights I really like and claim will matter down the line:

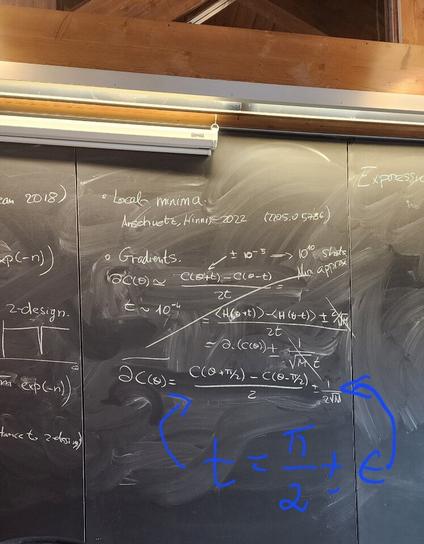

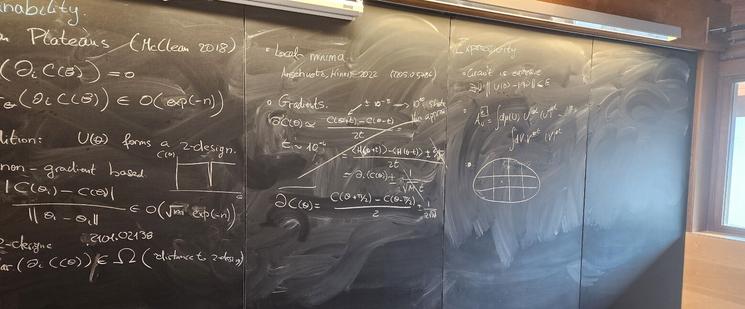

- statements about #barrenPlateaus are quantitative

- appearance of barren plateaus is implied by presence of #t-design properties https://arxiv.org/abs/2101.02138

- plateaus result from high dimensionality of the training parameter set.

To paraphrase grandmaster Bronstein, it's not about what but how.

We now know very, very well how VQAs go wrong. Early on it was only clear what the problem will be.

Each of the points above can guide better #ansatzae:

- they need to operate on clumped circuits to reduce the dimensionality

- they shouldn't rotate back and forth but use physics equations to guide the #quantumCompiling

- a restricted ansatz with justified expressibility can be quantitatively tested using the average+variance criteria of regular barren plateaus.

What the field achieved is to inform a large group of people how to recognize what a good variational ansatz will be once we will encounter it.

And that it will not be naive? Come on, easy would have been boring.

Connecting ansatz expressibility to gradient magnitudes and barren plateaus

Parameterized quantum circuits serve as ansätze for solving variational problems and provide a flexible paradigm for programming near-term quantum computers. Ideally, such ansätze should be highly expressive so that a close approximation of the desired solution can be accessed. On the other hand, the ansatz must also have sufficiently large gradients to allow for training. Here, we derive a fundamental relationship between these two essential properties: expressibility and trainability. This is done by extending the well established barren plateau phenomenon, which holds for ansätze that form exact 2-designs, to arbitrary ansätze. Specifically, we calculate the variance in the cost gradient in terms of the expressibility of the ansatz, as measured by its distance from being a 2-design. Our resulting bounds indicate that highly expressive ansätze exhibit flatter cost landscapes and therefore will be harder to train. Furthermore, we provide numerics illustrating the effect of expressiblity on gradient scalings, and we discuss the implications for designing strategies to avoid barren plateaus.