KittenTTS v0.8: three new models, smallest under 25 MB

Clanker Adjacent (my blog)

Ola

Elsewhere [https://codeberg.org/BobbyLLM/llama-conductor], I’ve been building a behaviour-shaping harness for local LLMs. In the process, I thought, “well, why not share what the voices inside your head are saying”. I hope that’s ok to do.

With that energy in mind, may I present Clanker Adjacent (because apparently I sound like a clanker; thanks, Lemmy! [https://lemmy.world/post/43503268/22321124]).

There’s not much there yet, but what there is may bring a wry smile. If reading long-form stuff floats your boat, take a look. I promise the next post will be “Show me your 80085”. Share it if you like it.

Clanker Adjacent [https://bobbyllm.github.io/llama-conductor/]

PS: Not a drive-by. I lurk here and get the shit kicked out of me over on /c/technology.

Introducing Mistral Small 4 | Mistral AI

Key architectural details

- Mixture of Experts (MoE): 128 experts, with 4 active per token, enabling efficient scaling and specialization.
- 119B total parameters, with 6B active parameters per token (8B including embedding and output layers).
- 256k context window, supporting long-form interactions and document analysis.
- Configurable reasoning effort: Toggle between fast, low-latency responses and deep, reasoning-intensive outputs.
- Native multimodality: Accepts both text and image inputs, unlocking use cases from document parsing to visual analysis.
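To make "128 experts, with 4 active per token" concrete, here is a minimal sketch of top-k expert routing. The expert counts come from the post; everything else is an assumption for illustration: the random router scores, the `route` helper, and the choice to softmax over only the selected experts are a generic MoE pattern, not Mistral's confirmed router.

```python
import math
import random

NUM_EXPERTS = 128  # from the post
TOP_K = 4          # from the post


def route(logits, k=TOP_K):
    """Pick the k highest-scoring experts and return (index, weight) pairs.

    Weights are a softmax over the selected scores only. This is one common
    scheme, assumed here for illustration.
    """
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    peak = max(logits[i] for i in top)  # subtract the max for numerical stability
    exps = [math.exp(logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


# Hypothetical router scores for a single token.
random.seed(0)
logits = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
chosen = route(logits)

for index, weight in chosen:
    print(f"expert {index:3d} weight {weight:.3f}")
print(f"active experts: {len(chosen)} of {NUM_EXPERTS} ({len(chosen) / NUM_EXPERTS:.1%})")
```

Only the chosen experts run for that token, which is why the active parameter count per token is so much smaller than the total.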

LLM Architecture Gallery

Short Doco: How LLMs Took Over The World - Everything is a Pattern

I am wondering: is the path big AI corps are taking, providing models via huge server farms, at odds with how capitalism normally plays out?

Normally costs come down over time (see solar or microchips). LLMs get smaller, and suddenly they fit on your device.

I checked OVHcloud's offerings of hosted models. They would all fit on a 64 GB Strix Halo, probably even in 32 GB of RAM. The SOTA models still have an edge, but honestly not much.
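A rough way to sanity-check a "does it fit" claim like this is to estimate the size of the weights from parameter count and quantization width. The sketch below is back-of-the-envelope only: the model sizes are hypothetical examples (not OVH's actual catalogue), the 80% headroom factor is an assumption, and KV cache and runtime overhead are ignored.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float, ram_gb: float,
         headroom: float = 0.8) -> bool:
    """True if the weights fit in `headroom` of RAM.

    The remaining share is left for the OS, KV cache, and so on; 0.8 is an
    assumed figure, not a measured one.
    """
    return weight_memory_gb(params_billion, bits_per_weight) <= ram_gb * headroom


# Hypothetical model sizes, all at 4-bit quantization.
for params in (8, 30, 70):
    size = weight_memory_gb(params, 4)
    print(f"{params}B @ 4-bit: ~{size:.1f} GB, "
          f"fits 32 GB: {fits(params, 4, 32)}, fits 64 GB: {fits(params, 4, 64)}")
```

Long contexts add KV-cache memory on top of this, so treat the result as a lower bound.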

CanIRun.ai — Can your machine run AI models?

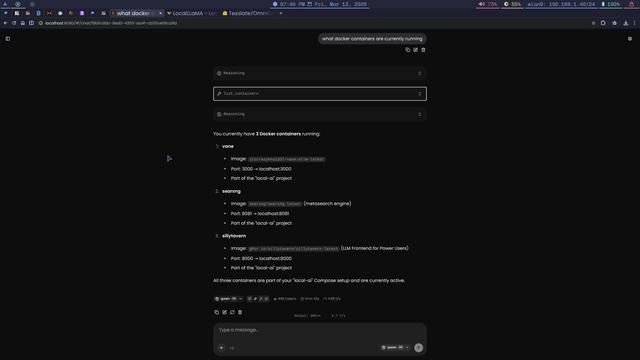

llama.cpp + mcp - docker and more

How to... (Maybe I am missing something)

Well, I run my own Open WebUI with Ollama, installed with Docker Compose and running locally on my home server with an NVIDIA GPU, and I am pretty happy with the overall result. I have only installed local open-source models like gpt-oss, deepseek-r1, llama (3.2, 4), qwen3… My use case is mostly asking questions about documentation for development (details of programming language syntax and such).

I have been running it for months now, and it occurred to me that it would be useful for the following tasks as well:

- audio transcription (voice messages to text)
- image generation (logos, small art for my games and such)

I fiddled around a bit but got nowhere. How do you do that from the Open WebUI web interface? (I have never used Ollama directly, only through the Open WebUI GUI.)

Guide to run Qwen3.5 locally