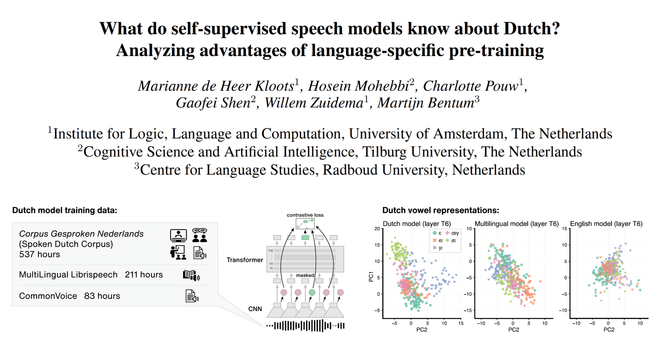

✨ Do self-supervised speech models learn to encode language-specific linguistic features from their training data, or only more language-general acoustic correlates?

At #Interspeech2025 we presented our new Wav2Vec2-NL model and SSL-NL evaluation dataset to test this!

📄 https://arxiv.org/abs/2506.00981

⬇️